| First roll | Second roll |

|---|---|

| 1 | 1 |

| 1 | 2 |

| 1 | 3 |

| 1 | 4 |

| 2 | 1 |

| 2 | 2 |

| 2 | 3 |

| 2 | 4 |

| 3 | 1 |

| 3 | 2 |

| 3 | 3 |

| 3 | 4 |

| 4 | 1 |

| 4 | 2 |

| 4 | 3 |

| 4 | 4 |

2 The Language of Probability

A phenomenon is random if there are multiple potential possibilities, and there is uncertainty about which possibility is realized. This chapter introduces the fundamental terminology and objects of random phenomena, including

- Possible outcomes (possibilities) of the random phenomenon

- Related events that could occur

- Random variables which measure numeric quantities based on outcomes

- Probability measures which assign degrees of likelihood or plausibility to events in a logically coherent way and reflect assumptions about the random phenomenon

- Distributions of random variables which describe their pattern of variability, and can be summarized by percentiles, expected values, standard deviations (and variances), and correlations (and covariances).

- Conditioning, which involves revising probabilities and distributions to reflect additional information

Probability models put all of the above together. A probability model of a random phenomenon consists of a sample space of possible outcomes, associated events and random variables, and a probability measure which specifies probabilities of events and determines distributions of random variables according to the assumptions of the model and available information.

Throughout this chapter we will illustrate ideas using the following examples.

Total or best? Roll a four-sided1 die twice and consider the sum and the larger of the two rolls (or the common roll if a die). Not very exciting? Maybe, but it is a familiar, simple, and concrete example. Also, a “toy” example can provide insight into more interesting problems, such as the following. In many sports, a competitor’s final ranking is based on the results of multiple attempts. Competitors in Olympic bobsled, for example, make four separate timed runs on the same course and their ranking is based on their total time. Competitors in Olympic shot put make six throws, but their ranking is based on their best throw. In sports with multiple attempts, how do the rankings compare if they are based on the total (or average) over all attempts (as in bobsled) or on the best attempt (as is shot put)?

Matching problem. A group of people all put their names in a hat for a Secret Santa gift exchange. The names are shuffled and everyone draws a name from the hat. We might be interested in questions like: What is the probability that someone selects their own name? How many people are expected to draw their own name? How do the answers to these questions depend on the name of people in the group? This a is version of a well known probability problem called the “matching problem”. The general setup involves \(n\) distinct “objects” labeled \(1, \ldots, n\) which are placed in \(n\) distinct “boxes” labeled \(1, \ldots, n\), with exactly one object placed in each box; for how many objects does the label on the object match the label on the box it is placed in?

Meeting problem. Several people plan to meet for lunch, but their arrival times are uncertain. We might be interested in whether they arrive within 15 minutes of one another, who arrives first and at what time, or how long the first person to arrive needs to wait for the others.

Collector problem. Each box of a brand of cereal contains a single prize from a collection. We might be interested in how many boxes we need to buy to complete the collection, or how many boxes we need to buy to complete five collections (say one collection for each of five kids), or which prize we get in the most boxes.

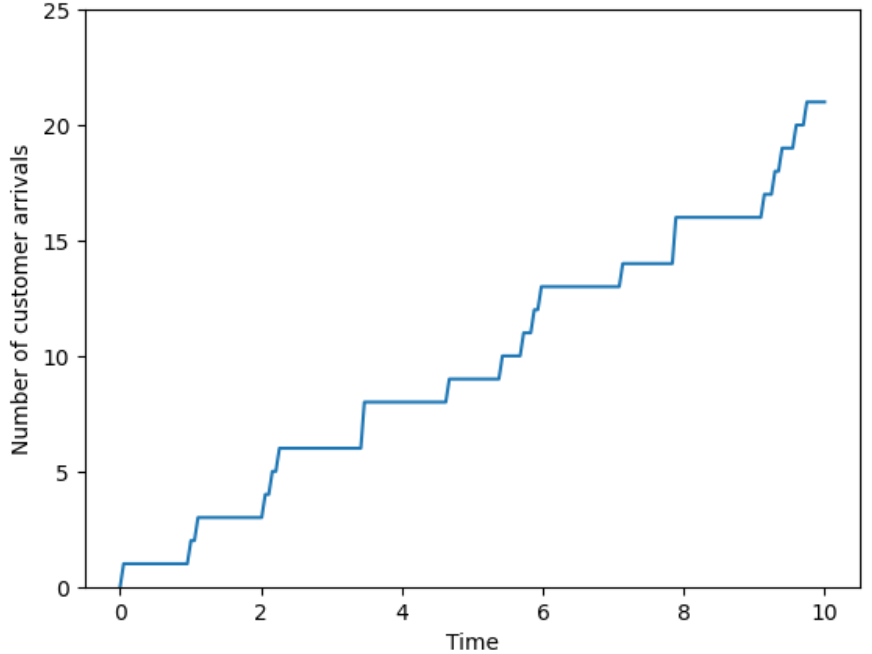

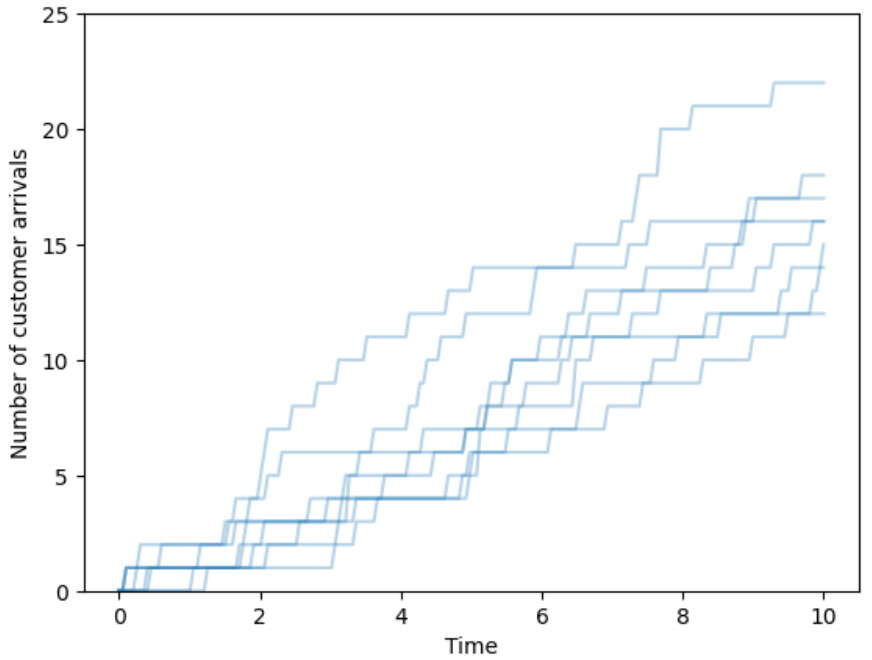

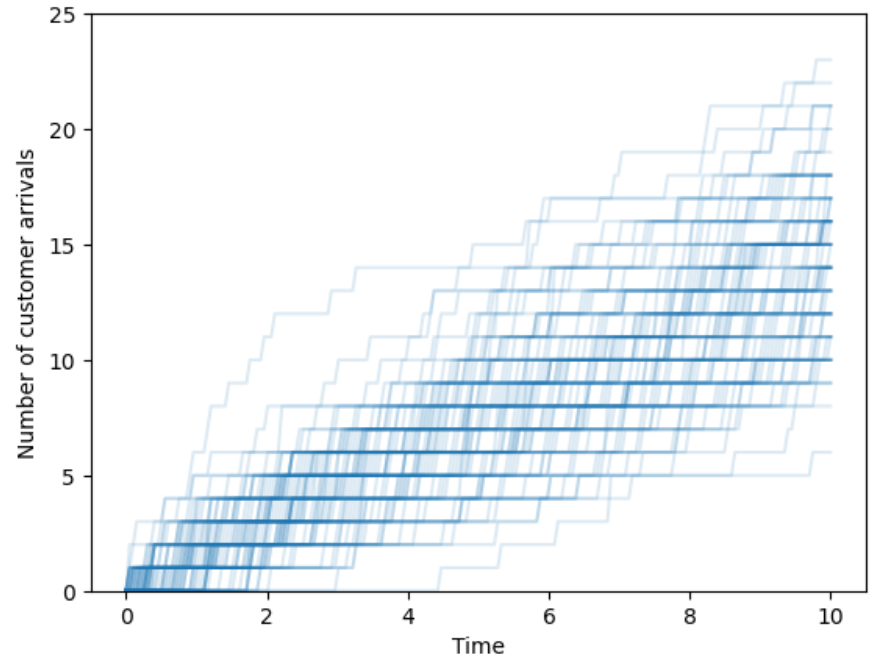

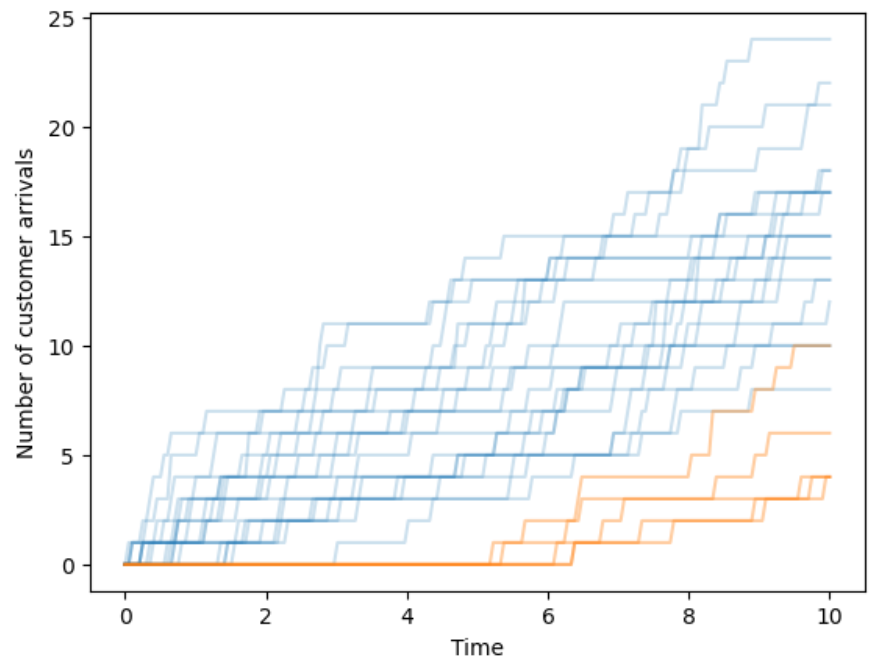

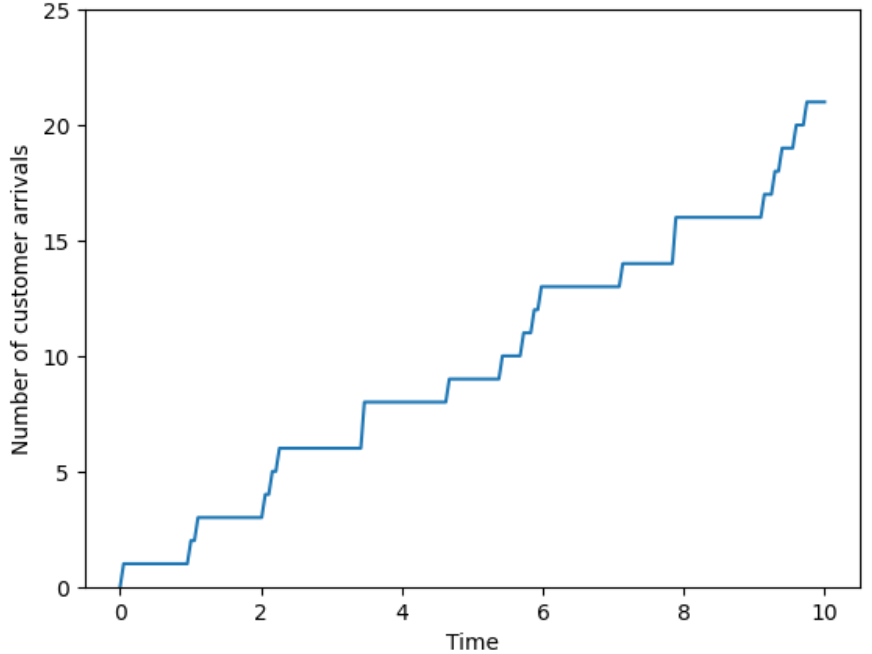

Arrivals over time. Customers enter a deli and take a number to mark their place in line. When the deli opens the counter starts 0; the first customer to arrive takes number 1, the second 2, etc. We record the counter over time, continuously, as it changes as customers arrive. We might be interested in the number of customers that arrive in some window of time, the time between customer arrivals, or the amount of time it takes for some number of customers to arrive. (And this is just the arrivals; we might also be interested in questions which involve the departures, such as: how much time a customer spends in the deli or how many customers are in the deli at a certain time.)

Full disclosure: many of the examples in this chapter involve rather dry tasks like discussing mathematical notation or listing elements of sets. Also, some of the things we do in these examples are rarely done in practice. So why bother? Many common mistakes in solving probability problems arise from misunderstanding these foundational objects. We hope that concrete—though sometimes uninteresting—examples foster understanding of fundamental concepts.

This chapter introduces what the fundamental objects of probability are, but not yet how to solve probability problems. Don’t worry; we’ll solve many interesting problems in the remaining chapters. Think of this chapter as introducing the “language” or “grammar” of probability. When first learning to write, we learn the basic elements of sentences: subjects, predicates, clauses, modifiers, etc. Understanding these fundamental building blocks is essential to learning how to write well, even if we don’t explicitly identify the subject, the verb, etc., in every sentence we write. Likewise, understanding the language of probability is crucial to learning how to solve probability problems, even if the language is sometimes unspoken.

2.1 Outcomes

Probability models can be applied to any situation in which there are multiple potential outcomes and there is uncertainty about which outcome is realized. Due to the wide variety of types of random phenomena, an outcome can be virtually anything:

- the result of a coin flip

- the results of a sequence of coin flips

- a shuffle of a deck of cards

- the weather conditions tomorrow in your city

- the path of a particular Atlantic hurricane

- the daily closing price of a certain stock over the next 30 days

- a noisy electrical signal

- the result of a diagnostic medical test

- a sample of car insurance polices

- the customers arriving at a store

- the result of an election

- the next World Series champion

- a play in a basketball game

And on and on. In particular, an outcome does not have to be a number.

The first step in defining a probability model for a random phenomenon is to identify the possible outcomes.

Definition 2.1 The sample space is the collection of all possible outcomes of a random phenomenon.

Mathematically, the sample space is a set containing all possible outcomes, while any individual outcome is an element in the sample space. The sample space is typically denoted2 \(\Omega\), the uppercase Greek letter “Omega”. An outcome is typically denoted \(\omega\), the lowercase Greek letter “omega”; \(\omega\) denotes a generic outcome much like the symbol \(u\) in \(\sqrt{u}\) denotes a generic input to the square root function. We write \(\omega \in \Omega\) (read \(\in\) as “in” or “an element of”) to represent that \(\omega\) is a possible outcome of sample space \(\Omega\).

The simplest random phenomena have just two distinct outcomes, in which case the sample space is just a set with two elements, e.g., \(\Omega=\{\text{no}, \text{yes}\}\), \(\Omega=\{\text{off}, \text{on}\}\), \(\Omega=\{0, 1\}\), \(\Omega=\{-1, 1\}\). For example, the sample space for a single coin flip could be \(\Omega = \{H, T\}\). If the coin lands on heads, we observe the outcome \(\omega = H\); if tails we observe \(\omega=T\).

In simple examples we can describe the sample space by listing all possible outcomes. However, constructing a list of all possible outcomes is rarely done in practice. We do so here only to provide some concrete examples of sample spaces. While a random phenomenon always has a corresponding sample space, in most situations the sample space of outcomes is at best only vaguely specified and can not be feasibly enumerated.

A random phenomenon is modeled by a single sample space. In Example 2.1 there was a single sample space whose outcomes represented the result of the pair of rolls; in particular, there was not a separate sample space for each of the individual rolls3. Whenever possible, a sample space outcome should be defined to provide the maximum amount of information about the outcome of random phenomenon.

Here’s another concrete example where we can list all the outcomes in the sample space. However, keep in mind that enumerating the sample space is rarely done in practice.

| Spot 1 | Spot 2 | Spot 3 | Spot 4 |

|---|---|---|---|

| 1 | 2 | 3 | 4 |

| 1 | 2 | 4 | 3 |

| 1 | 3 | 2 | 4 |

| 1 | 3 | 4 | 2 |

| 1 | 4 | 2 | 3 |

| 1 | 4 | 3 | 2 |

| 2 | 1 | 3 | 4 |

| 2 | 1 | 4 | 3 |

| 2 | 3 | 1 | 4 |

| 2 | 3 | 4 | 1 |

| 2 | 4 | 1 | 3 |

| 2 | 4 | 3 | 1 |

| 3 | 1 | 2 | 4 |

| 3 | 1 | 4 | 2 |

| 3 | 2 | 1 | 4 |

| 3 | 2 | 4 | 1 |

| 3 | 4 | 1 | 2 |

| 3 | 4 | 2 | 1 |

| 4 | 1 | 2 | 3 |

| 4 | 1 | 3 | 2 |

| 4 | 2 | 1 | 3 |

| 4 | 2 | 3 | 1 |

| 4 | 3 | 1 | 2 |

| 4 | 3 | 2 | 1 |

In the two previous examples, the sample space was discrete, in the sense that the outcomes could be enumerated in a list (though it could be a very long list). But in many cases, it is not possible to enumerate outcomes in a list, even in principle.

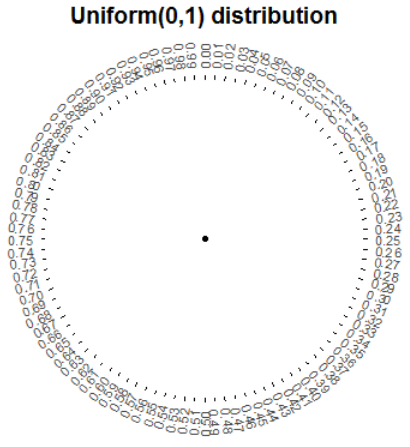

For example, consider the circular spinner (like from a kids game) in Figure 2.1. Imagine a needle anchored at the center of the circle which is spun and eventually lands pointing at a number on the outside of the circle. The values in the picture are rounded to two decimal places, but consider an idealized model where the spinner is infinitely precise and the needle infinitely fine so that any real number between 0 and 1 is a possible outcome. The sample space corresponding to a single spin of this spinner is the interval4 [0, 1]. There are uncountably many numbers in [0, 1] so it would not be possible to enumerate them in a list. The interval [0, 1] is an example of a continuous sample space.

In the previous example, outcomes were measured on a continuous scale; any real number between 0 and 60 was a possible arrival time. In practice we might round the arrival time to the nearest minute or second, but in principle and with infinite precision any real number in the continuous interval \([0, 60]\) is possible.

Furthermore, even in situations where outcomes are inherently discrete, it is often more convenient to model them as continuous. For example, if an outcome represents the annual salary in dollars of a randomly selected U.S. household, it would be more convenient to model the sample space as the continuous interval6 \([0, \infty)\) rather than discrete intervals like \(\{0, 1, 2, \ldots\}\) or \(\{0, 0.01, 0.02, \ldots\}\). Continuous models are often more tractable mathematically than discrete models.

In the previous examples, the sample space could be defined rather explicitly, either by direct enumeration or using set notation (like a Cartesian product). However, explicitly defining a sample space in a compact way is often not possible, as in the following example.

Any random phenomenon has a corresponding sample space but in some situations explicitly defining a outcome is not feasible. For example, suppose the random phenomenon is tomorrow’s weather. In order to describe an outcome, we need to specify (among other things): temperature, atmospheric pressure, wind, humidity, precipitation, and cloudiness, and how it all evolves over over the course of tomorrow, possibly in multiple locations. Representing all of this information in a compact way to define even just one outcome is virtually impossible; explicitly defining a sample space of all possible outcomes is hopeless. Regardless, the sample space is still there in the background whether we specify it or not.

Even though the sample space often is at best vaguely defined (“tomorrow’s weather”) and plays a background role, it is important to first consider what is possible before determining how probable events are. The sample space essentially defines the denominator in probability calculations. In particular, considering the sample space can help distinguish between “the particular and the general” (as discussed in Section 1.6).

2.1.1 Counting outcomes

When there are finitely many possibilities, we can ask: how many possible outcomes are there? In Example 2.1 and Example 2.2 we counted outcomes by enumerating them in a list. Of course, listing all the outcomes is unfeasible unless the sample space is very small. Now we’ll see a simple principle that can be applied to count outcomes.

All of the counting rules we will see are based on multiplying like in Example 2.6.

Lemma 2.1 (Multiplication principle for counting) Suppose that stage 1 of a process can be completed in any one of \(n_1\) ways. Further, suppose that for each way of completing the stage 1, stage 2 can be completed in any one of \(n_2\) ways. Then the two-stage process can be completed in any one of \(n_1\times n_2\) ways. This rule extends naturally to a \(\ell\)-stage process, which can then be completed in any one of \(n_1\times n_2\times n_3\times\cdots\times n_\ell\) ways.

In the multiplication principle it is not important whether there is a “first” or “second” stage. What is important is that there are distinct stages, each with its own number of “choices”. In Example 2.6, there was a bowl/cone stage, an ice cream flavor stage, and a sprinkle stage; it didn’t matter if the flavor was chosen first or second or third.

We can use the multiplication principle to verify the total number of possible outcomes for a few of our previous examples. In Example 2.1 an outcome is a pair (first roll, second roll). There are 4 possibilities for the first roll and 4 for the second, so \(4\times4 = 16\) possible pairs. In Example 2.2 an outcome is an arrangement of the 4 outcomes in the 4 spots. There are 4 possibilities for the object placed in spot 1. After placing that object, there are 3 possibilities for spot 2, then 2 possibilities for spot 3, with one object left for spot 4. So there are \(4\times3\times2\times1 = 24\) possible arrangements.

The multiplication principle provides the foundation for some other counting rules we will see later.

2.1.2 Exercises

Exercise 2.1 Consider the outcome of a sequence of 4 flips of a coin.

- Without enumerating the sample space, determine the number of outcomes.

- Enumerate the sample space and confirm the number of outcomes.

- We might be interested in the number of flips that land on heads. Explain why it is still advantageous to define the sample space as in the previous part, rather than as \(\Omega=\{0, 1, 2, 3, 4\}\).

Exercise 2.2 The latest series of collectible Lego Minifigures contains 3 different Minifigure prizes (labeled 1, 2, 3). Each package contains a single unknown prize. Suppose we only buy 3 packages and we consider as our sample space outcome the results of just these 3 packages (prize in package 1, prize in package 2, prize in package 3). For example, 323 (or (3, 2, 3)) represents prize 3 in the first package, prize 2 in the second package, prize 3 in the third package.

- Without enumerating the sample space, determine the number of outcomes.

- Enumerate the sample space and confirm the number of outcomes.

2.2 Events

An event is something that might happen or might be true. For example, if we’re interested in the weather conditions in our city tomorrow, events include

- it rains

- it does not rain

- the high temperature is 75°F (rounded to the nearest °F)

- the high temperature is above 75°F

- it rains and the high temperature is above 75°F

- it does not rain or the high temperature is not above 75°F

There are many possible outcomes for tomorrow’s weather, but each of the above will be true only for certain outcomes.

Definition 2.2 An event is a subset of the sample space. An event represents a collection of outcomes that some criteria.

The sample space is the collection of all possible outcomes; an event represents only those outcomes which satisfy some criteria. Events are typically denoted with capital letters near the start of the alphabet, with or without subscripts (e.g. \(A\), \(B\), \(C\), \(A_1\), \(A_2\)).

Mathematically, events are sets, so events can be composed from others using basic set operations like unions (\(A\cup B\)), intersections (\(A \cap B\)), and complements (\(A^c\)).

- Complements. Read \(A^c\) as “not \(A\)”, the outcomes that do not satisfy \(A\)

- Intersections. Read \(A\cap B\) as “\(A\) and \(B\)”, the outcomes that satisfy both \(A\) and \(B\)

- Unions. Read \(A \cup B\) as “\(A\) or \(B\)”, the outcomes that satisfy \(A\) or \(B\). Unions (\(\cup\), “or”) are always inclusive: \(A\cup B\) occurs if \(A\) occurs but \(B\) does not, \(B\) occurs but \(A\) does not, or both \(A\) and \(B\) occur. Note that the complement of a union is the intersection of the complements, and vice versa: \((A \cup B)^c = A^c \cap B^c\) and \((A \cap B)^c = A^c \cup B^c\),

In the weather example above we can write

- \(A\): it rains

- \(B=A^c\): it does not rain

- \(C\): the high temperature is 75°F (rounded to the nearest °F)

- \(D\): the high temperature is above 75°F

- \(E = A \cap D\): it rains and the high temperature is above 75°F

- \(F = A^c \cup D^c = (A\cap D)^c = B\cap D^c = E^c\): it does not rain or the high temperature is not above 75°F

| Team | Conference | Championships | Wins | PPG | FG3 | FG3A | FG2 | FG2A | FT | FTA |

|---|---|---|---|---|---|---|---|---|---|---|

| Detroit Pistons | Eastern | 3 | 17 | 110.3 | 11.4 | 32.4 | 28.2 | 54.6 | 19.8 | 25.7 |

| Houston Rockets | Western | 2 | 22 | 110.7 | 10.4 | 31.9 | 30.2 | 56.9 | 19.1 | 25.3 |

| San Antonio Spurs | Western | 5 | 22 | 113.0 | 11.1 | 32.2 | 32.0 | 60.4 | 15.8 | 21.2 |

| Charlotte Hornets | Eastern | 0 | 27 | 111.0 | 10.7 | 32.5 | 30.5 | 57.9 | 17.6 | 23.6 |

| Portland Trail Blazers | Western | 1 | 33 | 113.4 | 12.9 | 35.3 | 27.6 | 50.1 | 19.6 | 24.6 |

| Orlando Magic | Eastern | 0 | 34 | 111.4 | 10.8 | 31.1 | 29.8 | 55.2 | 19.6 | 25.0 |

| Indiana Pacers | Eastern | 0 | 35 | 116.3 | 13.6 | 37.0 | 28.4 | 52.6 | 18.7 | 23.7 |

| Washington Wizards | Eastern | 1 | 35 | 113.2 | 11.3 | 31.7 | 30.9 | 55.2 | 17.6 | 22.4 |

| Utah Jazz | Western | 0 | 37 | 117.1 | 13.3 | 37.8 | 29.2 | 52.0 | 18.7 | 23.8 |

| Dallas Mavericks | Western | 1 | 38 | 114.2 | 15.2 | 41.0 | 24.8 | 43.3 | 19.0 | 25.1 |

| Chicago Bulls | Eastern | 6 | 40 | 113.1 | 10.4 | 28.9 | 32.1 | 57.9 | 17.6 | 21.8 |

| Oklahoma City Thunder | Western | 1 | 40 | 117.5 | 12.1 | 34.1 | 31.0 | 58.5 | 19.2 | 23.7 |

| Toronto Raptors | Eastern | 1 | 41 | 112.9 | 10.7 | 32.0 | 31.1 | 59.3 | 18.4 | 23.4 |

| New Orleans Pelicans | Western | 0 | 42 | 114.4 | 11.0 | 30.1 | 31.1 | 57.5 | 19.3 | 24.4 |

In Example 2.8 notice that we ony said the winner was determined “at random”; we didn’t mention how. “At random” only implies that the winning team will be selected in a manner that involves uncertainly. “At random” does not necessarily imply that the 14 teams are equally likely. In fact, the 2023 NBA Draft Lottery was weighted to give teams with fewer wins the previous season a greater probability of winning the top pick. We’ll return to this idea later. For now, we’re just defining some events that are possible; later we will consider how probable they are.

If the outcomes of a sample space are represented by rows in a table, then events are subsets of rows which satisfy some criteria.

| First roll | Second roll | Sum is 4? |

|---|---|---|

| 1 | 1 | no |

| 1 | 2 | no |

| 1 | 3 | yes |

| 1 | 4 | no |

| 2 | 1 | no |

| 2 | 2 | yes |

| 2 | 3 | no |

| 2 | 4 | no |

| 3 | 1 | yes |

| 3 | 2 | no |

| 3 | 3 | no |

| 3 | 4 | no |

| 4 | 1 | no |

| 4 | 2 | no |

| 4 | 3 | no |

| 4 | 4 | no |

We reiterate (again!) that there is a single sample space, upon which all events are defined. In the above example, events that involved only the first or second roll such as \(D\) and \(E\) were still defined in terms of pairs of rolls. An outcome in a sample space should be defined to record as much information as possible so that the occurrence or non-occurrence of all events of interest can be determined.

Some events consist of a single outcome, or no outcomes at all (the “empty set” denoted \(\{\}\) or \(\emptyset\)).

Definition 2.3 Events \(A_1, A_2. A_3, \ldots\) are disjoint (a.k.a. mutually exclusive) if none of the events have any outcomes in common; that is, if \(A_i \cap A_j = \emptyset\) for all \(i\neq j\).

Roughly, disjoint events do not “overlap”. In Example 2.9, events \(B\) and \(C\) are disjoint since \(B \cap C = \emptyset\); there are no outcomes for which both the sum of the dice is at most 3 and the larger roll is a 3.

| Spot 1 | Spot 2 | Spot 3 | Spot 4 | Object 3 in spot 3? |

|---|---|---|---|---|

| 1 | 2 | 3 | 4 | yes |

| 1 | 2 | 4 | 3 | no |

| 1 | 3 | 2 | 4 | no |

| 1 | 3 | 4 | 2 | no |

| 1 | 4 | 2 | 3 | no |

| 1 | 4 | 3 | 2 | yes |

| 2 | 1 | 3 | 4 | yes |

| 2 | 1 | 4 | 3 | no |

| 2 | 3 | 1 | 4 | no |

| 2 | 3 | 4 | 1 | no |

| 2 | 4 | 1 | 3 | no |

| 2 | 4 | 3 | 1 | yes |

| 3 | 1 | 2 | 4 | no |

| 3 | 1 | 4 | 2 | no |

| 3 | 2 | 1 | 4 | no |

| 3 | 2 | 4 | 1 | no |

| 3 | 4 | 1 | 2 | no |

| 3 | 4 | 2 | 1 | no |

| 4 | 1 | 2 | 3 | no |

| 4 | 1 | 3 | 2 | yes |

| 4 | 2 | 1 | 3 | no |

| 4 | 2 | 3 | 1 | yes |

| 4 | 3 | 1 | 2 | no |

| 4 | 3 | 2 | 1 | no |

We can use the multiplication principle to count the number of outcomes that satisfy event \(A_3\) in Table 2.5. If object 3 is in spot 3, there are 3 objects that can go in spot 1, then 2 that can go in spot 2, leaving 1 for spot 4; for a total of \(3\times2\times1\times1=6\) of the 24 outcomes which satisfy event \(A_3\).

When more than just a few events are of interest, subscripts are commonly used to identify different events. In the previous example, we might also be interested in \(A_1\), the event that object 1 is placed in spot 1; \(A_2\), the event that object 2 is placed in spot 2; and so on.

Remember that intervals of real numbers such as \((a,b), [a,b], (a,b]\) are also sets, and so can also be events. For example, if an outcome is the result of a single spin of the spinner in Figure 2.1, events include

- \([0, 0.5]\), the result is between 0 and 0.5 (the needle lands in the right half of the spinner)

- \([0.75, 1]\), the result is between 0.75 and 1 (the needle lands in the northwest quarter of the spinner)

- \([0.595, 0.605)\), the result rounded to two decimal places is 0.60

- \(\{0.6\}\), the result is 0.6 exactly (the needle points exactly at 0.60000000\(\ldots\))

It is often helpful to conceptualize and visualize events (sets) with pictures, especially when dealing with continuous sample spaces.

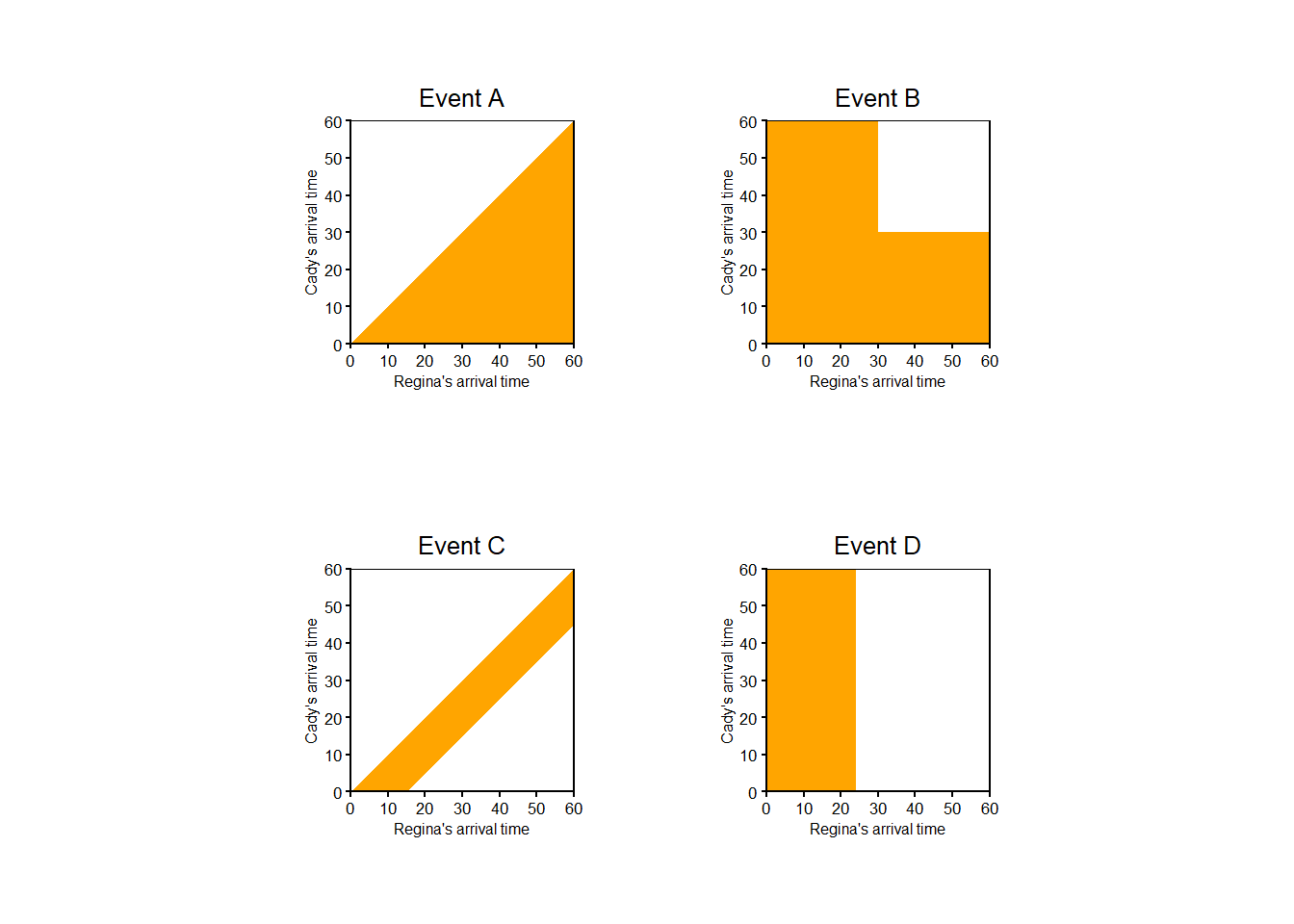

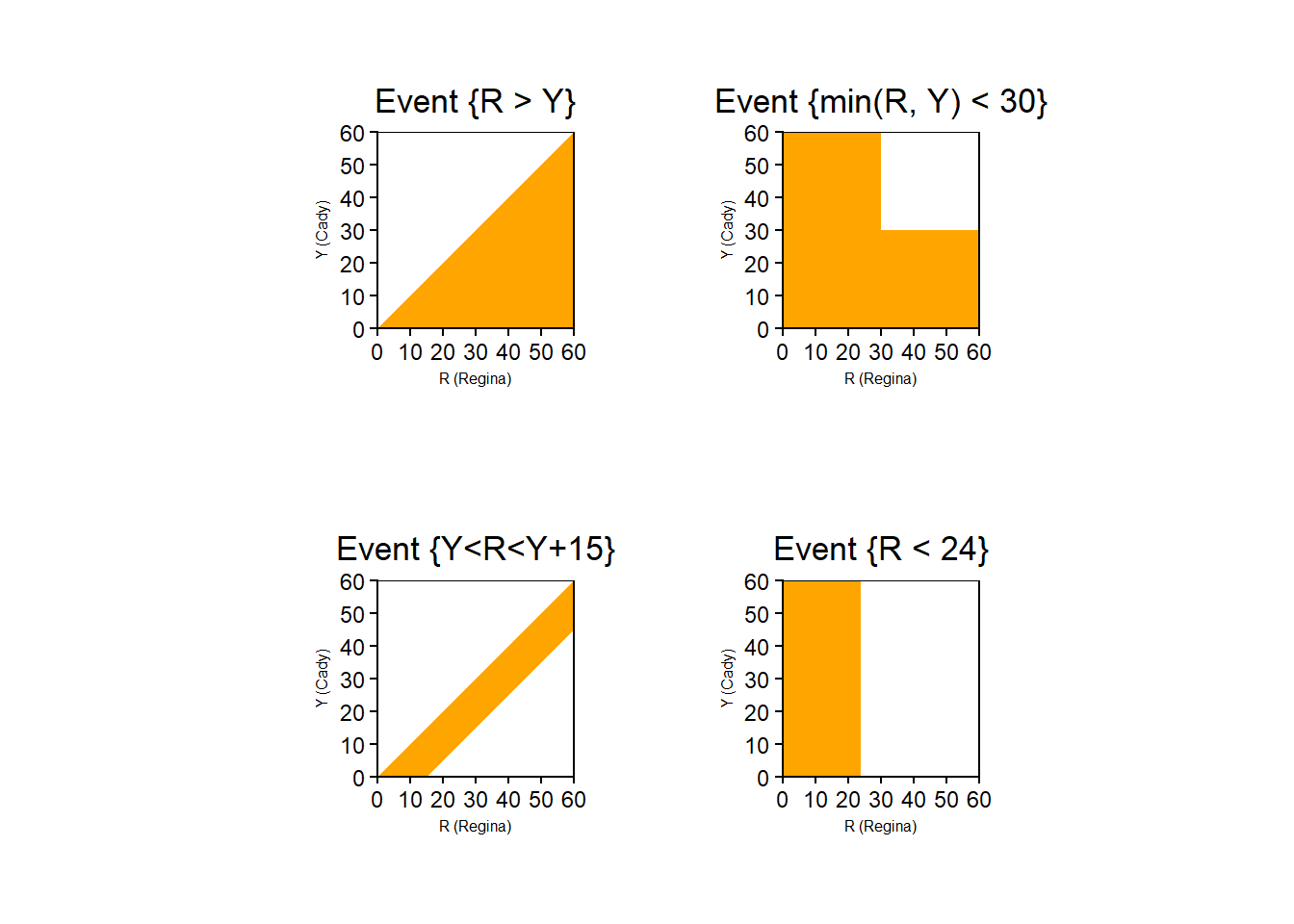

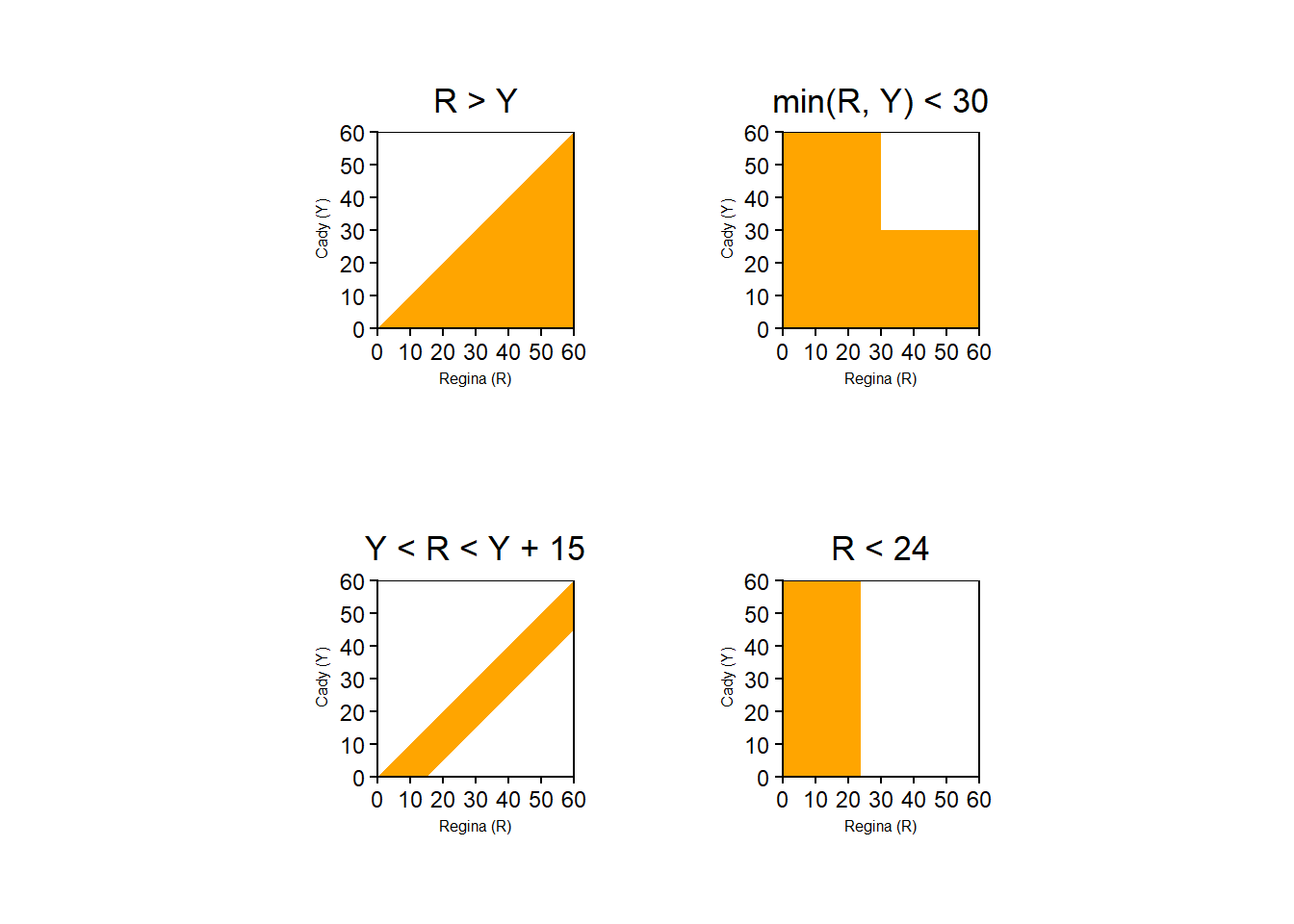

In Example 2.11 the sample space consists of (Regina, Cady) pairs of arrival times so any event must be expressed as a collection of pairs. Even though the criteria for event \(D\) involves only Regina’s arrival time, the event is not simply [0, 24]; we need to consider all (Regina, Cady) pairs for which the Regina component is in the interval [0, 24].

In many situations it is not possible to explicitly define a sample space in a compact way, and so outcomes and events are often only vaguely defined. Nevertheless, there is always a sample space in the background representing possible outcomes, and collections of these outcomes represent events of interest.

2.2.1 The collection of events of interest

An outcome is a possible realization of a random phenomenon. The sample space is the set of all possible outcomes. An event is a subset of the space space consisting of outcomes that satisfy some criteria. There are many events of interest for any random phenomenon. The collection of all events of interest is often denoted \(\mathcal{F}\).

An event \(A\) is a set. The collection \(\mathcal{F}\) of events of interest is a collection of sets. For the purposes of this text, \(\mathcal{F}\) can be considered to be the set of all subsets9 of \(\Omega\).

As an example, consider a single roll of a four-sided die.

| Event | Description | Occurs upon observing outcome \(\omega=3\)? |

|---|---|---|

| \(\emptyset\) | Roll nothing (not possible) | No |

| \(\{1\}\) | Roll a 1 | No |

| \(\{2\}\) | Roll a 2 | No |

| \(\{3\}\) | Roll a 3 | Yes |

| \(\{4\}\) | Roll a 4 | No |

| \(\{1, 2\}\) | Roll a 1 or a 2 | No |

| \(\{1, 3\}\) | Roll a 1 or a 3 | Yes |

| \(\{1, 4\}\) | Roll a 1 or a 4 | No |

| \(\{2, 3\}\) | Roll a 2 or a 3 | Yes |

| \(\{2, 4\}\) | Roll a 2 or a 4 | No |

| \(\{3, 4\}\) | Roll a 3 or a 4 | Yes |

| \(\{1, 2, 3\}\) | Roll a 1, 2, or 3 (a.k.a. do not roll a 4) | Yes |

| \(\{1, 2, 4\}\) | Roll a 1, 2, or 4 (a.k.a. do not roll a 3) | No |

| \(\{1, 3, 4\}\) | Roll a 1, 3, or 4 (a.k.a. do not roll a 2) | Yes |

| \(\{2, 3, 4\}\) | Roll a 2, 3, or 4 (a.k.a. do not roll a 1) | Yes |

| \(\{1, 2, 3, 4\}\) | Roll something | Yes |

A random phenomenon corresponds to a single sample space, but there are many events of interest. Listing the collection of all possible events as in the previous table is rarely done in practice, but we do so here to provide a concrete example of \(\mathcal{F}\).

2.2.2 Exercises

Exercise 2.3 The latest series of collectible Lego Minifigures contains 3 different Minifigure prizes (labeled 1, 2, 3). Each package contains a single unknown prize. Suppose we only buy 3 packages and we consider as our sample space outcome the results of just these 3 packages (prize in package 1, prize in package 2, prize in package 3). For example, 323 (or (3, 2, 3)) represents prize 3 in the first package, prize 2 in the second package, prize 3 in the third package.

- Let \(A_1\) be the event that prize 1 is obtained—that is, at least one of the packages contains prize 1—and define \(A_2, A_3\) similarly for prize 2, 3.

- Let \(B_1\) be the event that only prize 1 is obtained—that is, all three packages contain prize 1—and define \(B_2, B_3\) similarly for prize 2, 3.

Identify the following events as sets and interpret them in words

- \(A_1\) (hint: define \(A_1^c\) first)

- \(B_1\)

- \(A_1 \cap A_2 \cap A_3\)

- \(A_1 \cup A_2 \cup A_3\)

- \(B_1 \cap B_2 \cap B_3\)

- \(B_1 \cup B_2 \cup B_3\)

Exercise 2.4 Katniss throws a dart at a circular dartboard with radius 1 foot. (Assume that Katniss’s dart never misses the dartboard.)

Draw a picture to represent each of these events.

- \(A\), Katniss’s dart lands within 1 inch of the center of the dartboard.

- \(B\), Katniss’s dart lands more than 1 inch but less than 2 inches away from the center of the dartboard.

- \(E\), Katniss’s dart lands within 1 inch of the outside edge of the dartboard.

2.3 Random variables

Statisticians use the terms observational unit and variable. Observational units are the people, places, things, etc., for which data is observed. Variables are the measurements made on the observational units. For example, the observational units in a study could be college students, while variables could be age, high college GPA, major GPA, number of credits completed, number of Statistics courses taken, etc.

In probability, an outcome of a random phenomenon plays a role analogous to an observational unit in statistics. The sample space of outcomes is often only vaguely defined. In many situations we are less interested in detailing the outcomes themselves and more interested in whether or not certain events occur, or with measurements that we can make for the outcomes. For example, if the random phenomenon corresponds to randomly selecting a single student at a college an outcome would be the selected student, but we are more interested in quantities like the student’s GPA or number of credits completed. If we randomly select a sample of students, we are less interested in who the students are, and more interested in questions which involve variables such as what is the relationship between college GPA and major GPA? In probability, random variables play a role analogous to variables in statistics.

Definition 2.4 A random variable assigns a number measuring some quantity of interest to each outcome of a random phenomenon. That is, a random variable is a function that takes an outcome in the sample space as input and returns a number as output.

If we’re interested in the weather conditions in our city tomorrow, random variables include

- high temperature (°F)

- amount of precipitation (cm)

- humidity (%)

- maximum wind speed (mph)

Each of these quantities will take a value that depends on tomorrow’s weather conditions. Since there are a range of possibilities for tomorrow’s weather conditions, there is a range of values that each of these random variables can take.

Random variables are typically denoted by capital letters near the end of the alphabet, with or without subscripts: e.g. \(X\), \(Y\), \(Z\), or \(X_1\), \(X_2\), \(X_3\), etc.

A random variable is “variable” in the sense that it can take different values—that is, it can vary—and the value it takes is uncertain—that is, “random”.

In statistics, data is often stored in a spreadsheet or data table with rows corresponding to observational units and columns to variables. Likewise, in probability it helps to visualize a table with rows corresponding to outcomes and columns to random variables. Each outcome is associated with a value of the random variable. Since the outcome is uncertain, the value the random variable takes is also uncertain.

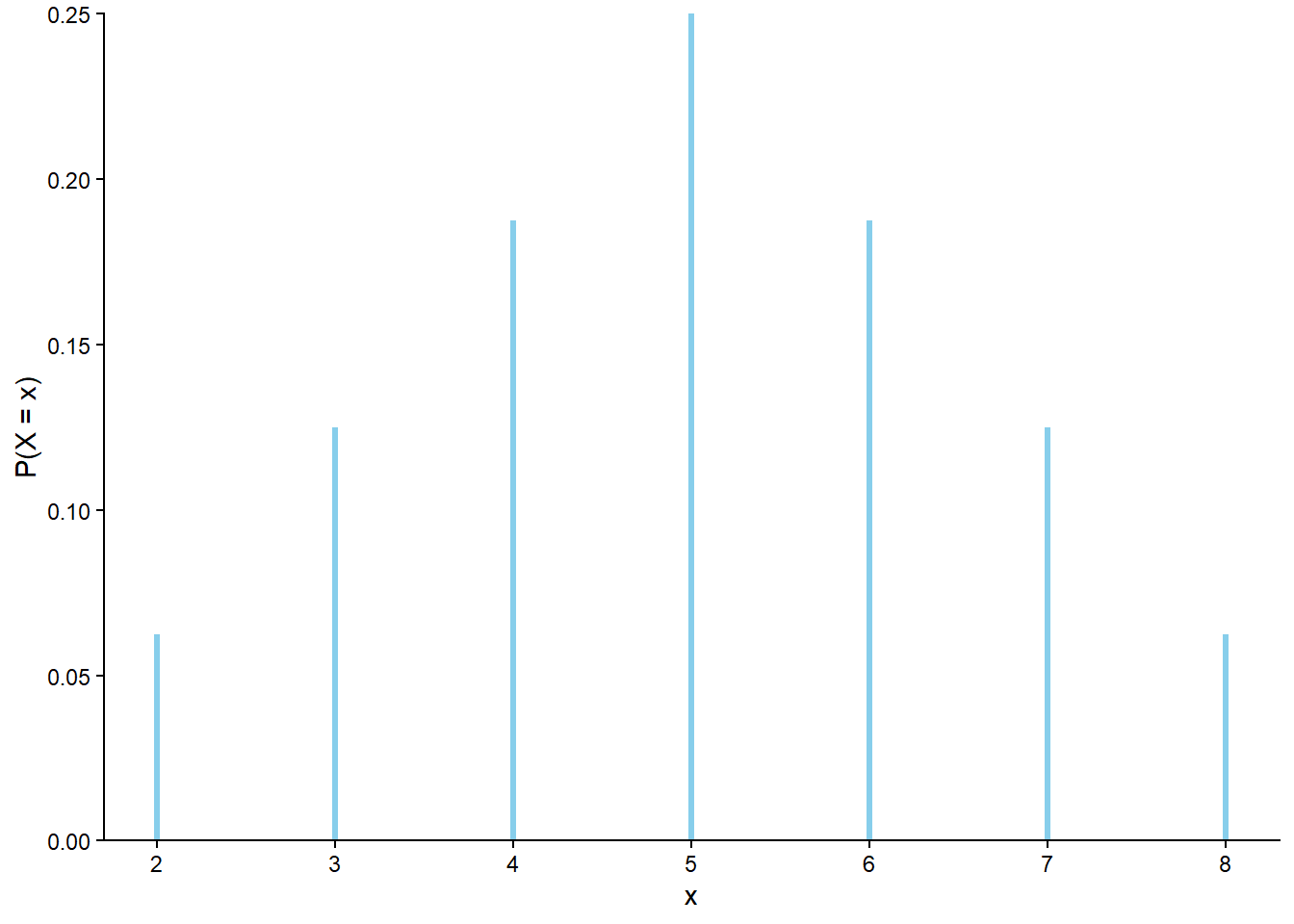

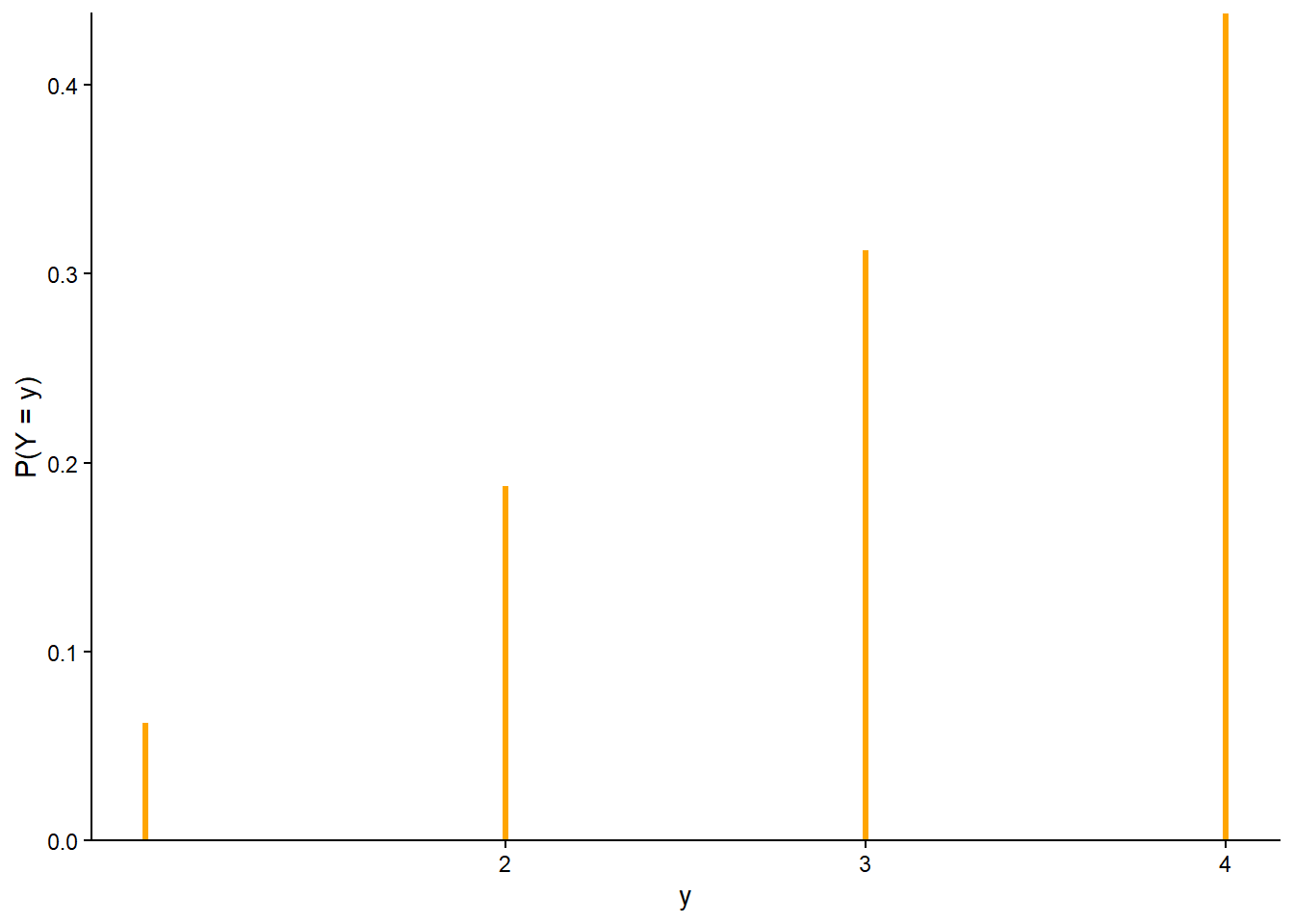

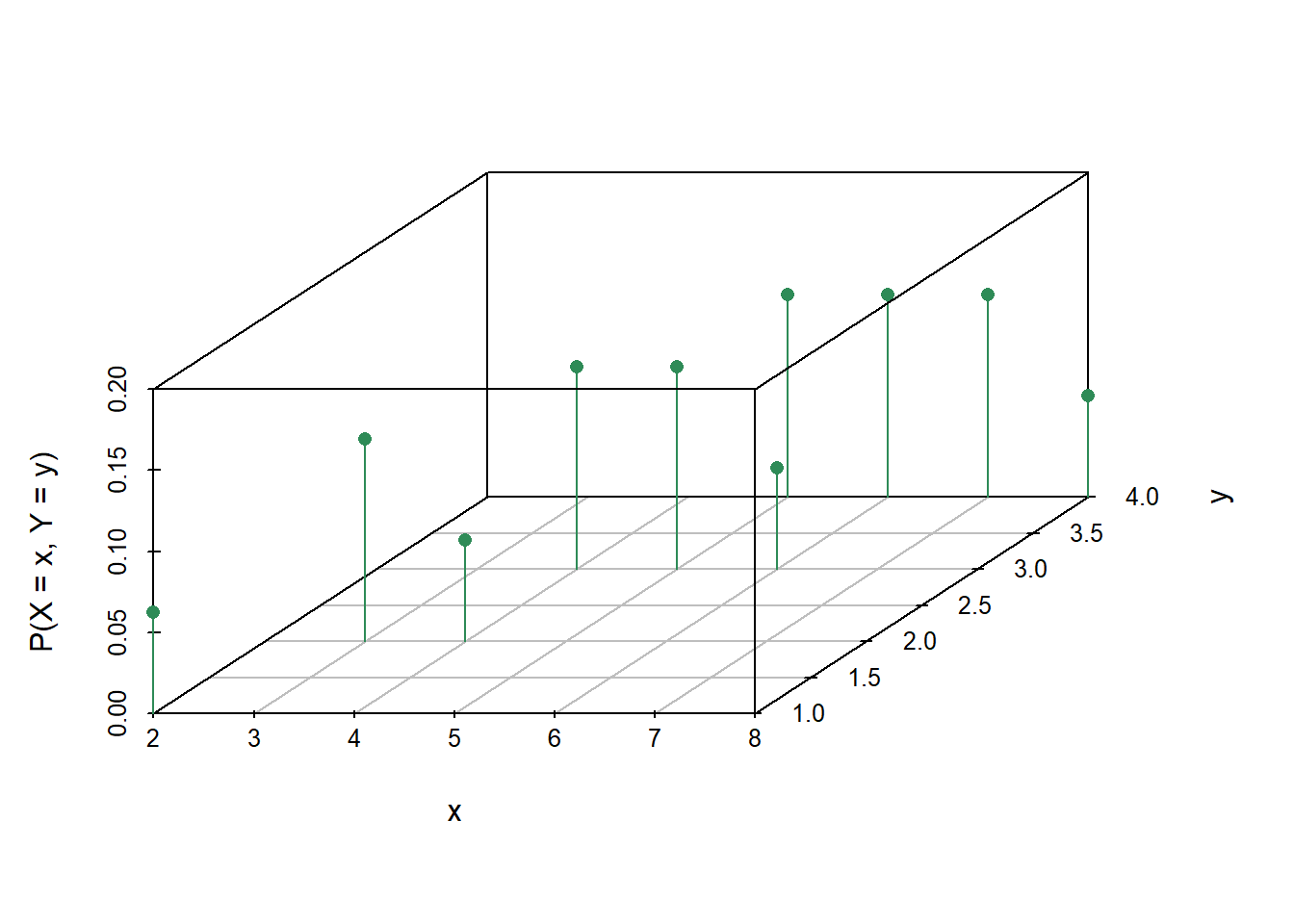

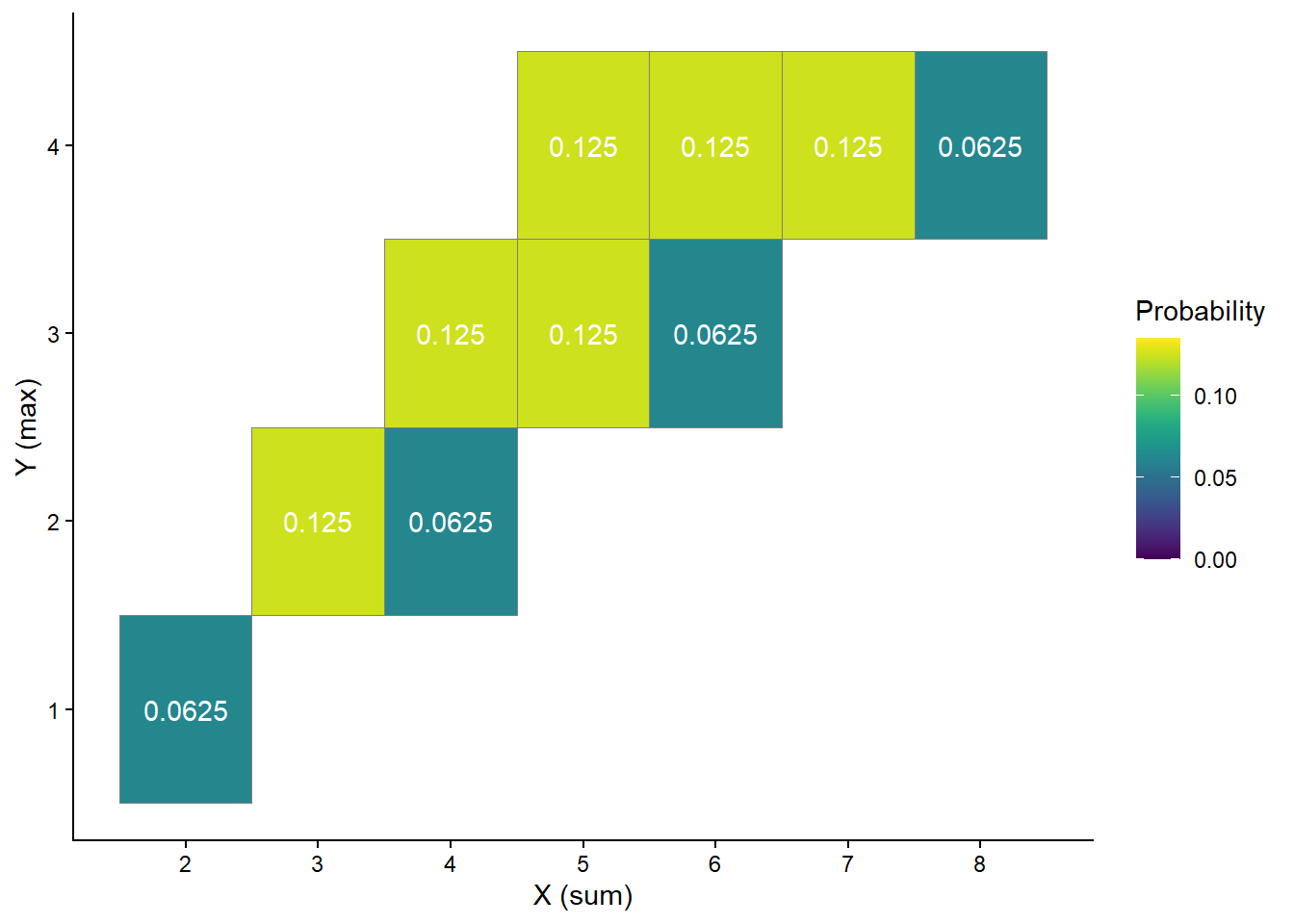

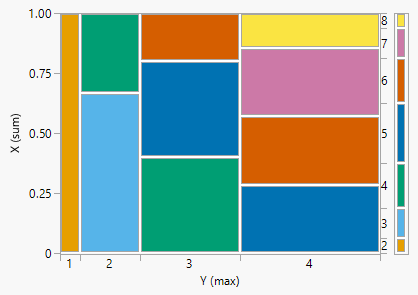

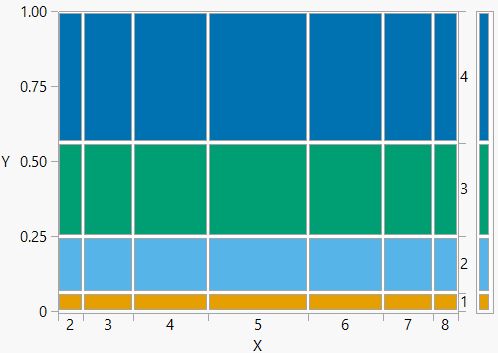

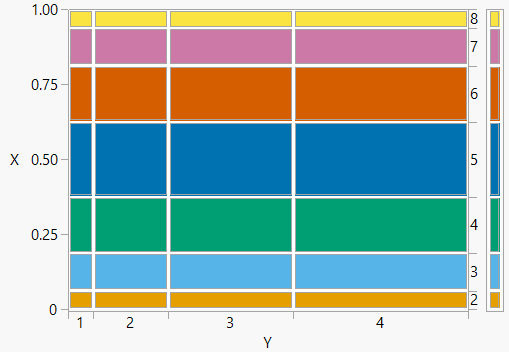

| Outcome (First roll, second roll) | X (sum) | Y (max) |

|---|---|---|

| (1, 1) | 2 | 1 |

| (1, 2) | 3 | 2 |

| (1, 3) | 4 | 3 |

| (1, 4) | 5 | 4 |

| (2, 1) | 3 | 2 |

| (2, 2) | 4 | 2 |

| (2, 3) | 5 | 3 |

| (2, 4) | 6 | 4 |

| (3, 1) | 4 | 3 |

| (3, 2) | 5 | 3 |

| (3, 3) | 6 | 3 |

| (3, 4) | 7 | 4 |

| (4, 1) | 5 | 4 |

| (4, 2) | 6 | 4 |

| (4, 3) | 7 | 4 |

| (4, 4) | 8 | 4 |

Mathematically, a random variable \(X\) is a function that takes an outcome \(\omega\) in the sample space \(\Omega\) as input and returns a number \(X(\omega)\) as output; we write \(X:\Omega\mapsto \mathbb{R}\). The random variable itself is typically denoted with a capital letter (\(X\)); possible values of that random variable are denoted with lower case letters (\(x\)). Think of the capital letter \(X\) as a label standing in for a formula like “the sum of two rolls of a four-sided die” and \(x\) as a dummy variable standing in for a particular value like 3.

In Example 2.17, the pair \((X, Y)\) is a random vector10. The output of each of \(X\) and \(Y\) is a number; the output of \((X, Y)\) is an ordered pair of numbers. A random vector is simply a vector of random variables.

One of the main reasons for modeling a sample space as the set of possible outcomes rather than the set of all possible values of some random variable is that we often want to define many random variables on the same sample space, and study relationships between them. As a statistics analogy, you would not be able to study the relationship between college GPA and major GPA unless you measured both variables for the same set of students.

2.3.1 Types of random variables

There are two main types of random variables.

- Discrete random variables take at most countably many possible values (e.g., \(0, 1, 2, \ldots\)). They are often counting variables (e.g., the number of coin flips that land on heads).

- Continuous random variables can take any real value in some interval (e.g., \([0, 1]\), \([0,\infty)\), \((-\infty, \infty)\).). That is, continuous random variables can take uncountably many different values. Continuous random variables are often measurement variables (e.g., height, weight, income).

In some problems, there are many random variables of interest, as in the following example.

2.3.2 A random variable is a function

Recall that for a mathematical function11 \(g\), given an input \(u\), the function returns a real number \(g(u)\). For example, if \(g\) is the square root function, \(g(u) = \sqrt{u}\), then \(g(9) = 3\) and \(g(10) = 3.162278...\). If the input comes from some set \(S\) (i.e. \(u\in S\)), we write \(g:S\mapsto \mathbb{R}\).

A random variable \(X\) is a function which maps each outcome \(\omega\) in the sample space \(\Omega\) to a real number \(X(\omega)\); \(X:\Omega\mapsto\mathbb{R}\). For a single outcome \(\omega\), the value \(x = X(\omega)\) is a single number; notice that \(x\) represents the output of the function \(X\) rather than the input. However, it is important to remember that the random variable \(X\) itself is a function, and not a single number.

You are probably familiar with functions expressed as simple closed form formulas of their inputs: \(g(u)=5u\), \(g(u)=u^2\), \(g(u)=\log u\), etc. While any random variable is some function, the function is rarely specified as an explicit mathematical formula of its input \(\omega\). Often, outcomes are not even numbers (e.g., sequences of coin flips), or only vaguely specified if at all (e.g., tomorrow’s weather conditions). In Example 2.17 we defined \(X\) through the words “sum of two rolls of a fair four-sided dice” instead of as a formula12.

It is more appropriate to think of a random variable as a function in the sense of a scale at a grocery store which maps a fruit to its weight, \(X: \text{fruit}\mapsto\text{weight}\). Put an apple on the scale and the scale returns a number, \(X(\text{apple})\), the weight of the apple. Likewise, \(X(\text{orange})\), \(X(\text{banana})\). The random variable \(X\) is the scale itself. This simplistic analogy assumes a sample space outcome is a single fruit. Of course, it’s even more complicated in reality since an outcome can be considered a set of fruits, so that we have for example \(X(\{\text{2 apples}, \text{3 oranges}\})\), and all fruits do not weigh the same, so that \(X(\text{this apple})\) is not the same as \(X(\text{that apple})\). But the idea is that a function is like a scale, with an input (fruits) and an output (weight). The input does not have to be a number, but the output does.

Suppose I’m going to randomly select some fruits, put them in a brown grocery bag, and place it on the scale. It wouldn’t be feasible to enumerate all the combinations of fruits I could put in the bag, but even so you know that any possible combination has some weight which could be measured by the scale. There is still a function (scale) that maps an input (fruits in the bag) to a numerical output (weight), even if that function is not explicitly specified with a mathematical formula. Now suppose I’ve selected some fruits and put the bag on the scale. Even if you can’t see what fruits are inside the bag, you can still read the weight off the scale. But even if you only observe the weight, you know there was still a background random process of putting fruits in a bag which resulted in a particular outcome having the observed weight.

The “weighing fruits in a bag” scenario in the previous paragraph illustrates how probability usually works:

- We typically don’t explicitly specify outcomes or the sample space, but we know that different outcomes can result in different values of random variables. That is, we know there is some function which maps outcomes of the random phenomenon to values of the random variable, even if we don’t have an explicit formula for the inputs to the function (sample space outcomes) or the function itself.

- We might not observe outcomes in full detail (e.g., tomorrow’s weather conditions), but we often can still observe values of random variables (e.g., tomorrow’s high temperature).

2.3.3 Tranformations of random variables

We are often interested in random variables that are derived from others. For example, if the random variable \(X\) represents the radius (cm) of a randomly selected circle, then \(Y = \pi X^2\) is a random variable representing the circle’s area (\(\text{cm}^2\)). If the random variables \(W\) and \(T\) represent the weight (kg) and height (m), respectively, of a randomly selected person, then \(S = W / T^2\) is a random variable representing the person’s body mass index (\(\text{kg}/\text{m}^2\)).

A function of a random variable is also a random variable. That is, if \(X\) is a random variable and \(g\) is a function, then \(Y=g(X)\) is also a random variable13. For example, if \(u\) is a radius of a circle, the function \(g(u) = \pi u^2\) outputs its area; if \(X\) is a random variable representing the radius of a randomly selected circle then \(Y = g(X)=\pi X^2\) is a random variable representing the circle’s area.

Sums and products, etc., of random variables defined on the same sample space are random variables. That is, if random variables \(X\) and \(Y\) are defined on the same sample space then \(X+Y\), \(X-Y\), \(XY\), and \(X/Y\) are also random variables. Similarly, it is possible to make comparisons such as \(X\ge Y\) and apply other transformations for random variables defined on the same sample space.

| Team | $W$ | $X_3$ | $Y_3$ | $X_2$ | $Y_2$ | $X_1$ | $Y_1$ | $82-W$ | $W/82$ | $X_1/Y_1$ | $\frac{X_2+X_3}{Y_2+Y_3}$ | $\frac{Y_3}{Y_2+Y_3}$ | $3X_3 + 2X_2 + X_1$ |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Detroit Pistons | 17 | 11.4 | 32.4 | 28.2 | 54.6 | 19.8 | 25.7 | 65 | 0.207 | 0.770 | 0.455 | 0.372 | 110.4 |

| Houston Rockets | 22 | 10.4 | 31.9 | 30.2 | 56.9 | 19.1 | 25.3 | 60 | 0.268 | 0.755 | 0.457 | 0.359 | 110.7 |

| San Antonio Spurs | 22 | 11.1 | 32.2 | 32.0 | 60.4 | 15.8 | 21.2 | 60 | 0.268 | 0.745 | 0.465 | 0.348 | 113.1 |

| Charlotte Hornets | 27 | 10.7 | 32.5 | 30.5 | 57.9 | 17.6 | 23.6 | 55 | 0.329 | 0.746 | 0.456 | 0.360 | 110.7 |

| Portland Trail Blazers | 33 | 12.9 | 35.3 | 27.6 | 50.1 | 19.6 | 24.6 | 49 | 0.402 | 0.797 | 0.474 | 0.413 | 113.5 |

| Orlando Magic | 34 | 10.8 | 31.1 | 29.8 | 55.2 | 19.6 | 25.0 | 48 | 0.415 | 0.784 | 0.470 | 0.360 | 111.6 |

| Indiana Pacers | 35 | 13.6 | 37.0 | 28.4 | 52.6 | 18.7 | 23.7 | 47 | 0.427 | 0.789 | 0.469 | 0.413 | 116.3 |

| Washington Wizards | 35 | 11.3 | 31.7 | 30.9 | 55.2 | 17.6 | 22.4 | 47 | 0.427 | 0.786 | 0.486 | 0.365 | 113.3 |

| Utah Jazz | 37 | 13.3 | 37.8 | 29.2 | 52.0 | 18.7 | 23.8 | 45 | 0.451 | 0.786 | 0.473 | 0.421 | 117.0 |

| Dallas Mavericks | 38 | 15.2 | 41.0 | 24.8 | 43.3 | 19.0 | 25.1 | 44 | 0.463 | 0.757 | 0.474 | 0.486 | 114.2 |

| Chicago Bulls | 40 | 10.4 | 28.9 | 32.1 | 57.9 | 17.6 | 21.8 | 42 | 0.488 | 0.807 | 0.490 | 0.333 | 113.0 |

| Oklahoma City Thunder | 40 | 12.1 | 34.1 | 31.0 | 58.5 | 19.2 | 23.7 | 42 | 0.488 | 0.810 | 0.465 | 0.368 | 117.5 |

| Toronto Raptors | 41 | 10.7 | 32.0 | 31.1 | 59.3 | 18.4 | 23.4 | 41 | 0.500 | 0.786 | 0.458 | 0.350 | 112.7 |

| New Orleans Pelicans | 42 | 11.0 | 30.1 | 31.1 | 57.5 | 19.3 | 24.4 | 40 | 0.512 | 0.791 | 0.481 | 0.344 | 114.5 |

Remember that we can visualize outcomes as rows in a spreadsheet with random variables as columns. Random variables defined on the same sample space can be put in a single spreadsheet. Each row corresponds to an outcome, and reading across any row there is a value in the column corresponding to each random variable. Random variables derived from transformations of other random variables append columns to the spreadsheet. New random variables can be defined by going row-by-row, outcome-by-outcome, and applying a transformation within each row to the values of other random variables.

Using capital letters like \(X\) or \(Y\) to denote random variables is standard practice. To help develop comfort with this mathematical notation, we will often label columns in tables with their random variable symbols (as we did in Table 2.8). Later, when writing code we will often denote random variables with symbols like X or Y. However, keep in mind that mathematical symbols like \(X\) or \(Y\) represent variables in a context. While you should develop comfort with the notation, you can—and probably should—use more informative labels like “wins” or wins rather than \(W\).

2.3.4 Indicator random variables

Random variables that only take two possible values, 0 and 1, have a special name.

Definition 2.5 An indicator (a.k.a., Bernoulli, a.k.a. Boolean) random variable can take only the values 0 or 1. If \(A\) is an event then the corresponding indicator random variable \(\textrm{I}_A\) is defined as \[ \textrm{I}_A(\omega) = \begin{cases} 1, & \omega \in A,\\ 0, & \omega \notin A \end{cases} \] That is, \(\textrm{I}_A\) equals 1 if event \(A\) occurs, and \(\textrm{I}_A\) equals 0 if event \(A\) does not occur.

Indicators provide the bridge between events (sets) and random variables (functions). Any event either occurs or not; a realization of any event is either true (\(\omega \in A\)) or false (\(\omega \notin A\)). An indicator random variable just translates “true” or “false” into numbers, 1 for “true” and 0 for “false”.

| Outcome | $X$ | $I_1$ | $I_2$ | $I_3$ | $I_4$ |

|---|---|---|---|---|---|

| 1234 | 4 | 1 | 1 | 1 | 1 |

| 1243 | 2 | 1 | 1 | 0 | 0 |

| 1324 | 2 | 1 | 0 | 0 | 1 |

| 1342 | 1 | 1 | 0 | 0 | 0 |

| 1423 | 1 | 1 | 0 | 0 | 0 |

| 1432 | 2 | 1 | 0 | 1 | 0 |

| 2134 | 2 | 0 | 0 | 1 | 1 |

| 2143 | 0 | 0 | 0 | 0 | 0 |

| 2314 | 1 | 0 | 0 | 0 | 1 |

| 2341 | 0 | 0 | 0 | 0 | 0 |

| 2413 | 0 | 0 | 0 | 0 | 0 |

| 2431 | 1 | 0 | 0 | 1 | 0 |

| 3124 | 1 | 0 | 0 | 0 | 1 |

| 3142 | 0 | 0 | 0 | 0 | 0 |

| 3214 | 2 | 0 | 1 | 0 | 1 |

| 3241 | 1 | 0 | 1 | 0 | 0 |

| 3412 | 0 | 0 | 0 | 0 | 0 |

| 3421 | 0 | 0 | 0 | 0 | 0 |

| 4123 | 0 | 0 | 0 | 0 | 0 |

| 4132 | 1 | 0 | 0 | 1 | 0 |

| 4213 | 1 | 0 | 1 | 0 | 0 |

| 4231 | 2 | 0 | 1 | 1 | 0 |

| 4312 | 0 | 0 | 0 | 0 | 0 |

| 4321 | 0 | 0 | 0 | 0 | 0 |

Even though they seem simple, indicator random variables are very useful. In the matching problem, it is not feasible to enumerate the outcomes and count when there is a large number \(n\) of items and spots. Using indicators allows you to count incrementally—is just this item in the correct spot?— rather than all at once. Representing a count as a sum of indicator random variables is a very common and useful strategy, especially in problems that involve “find the expected number of…”

Here is a little story that illustrates the idea of incremental counting with indicators. Imagine a dad and his young child are reading a picture book. They come to a page that has twenty pictures of fruits, of which seven are bananas. The following conversation ensues.

- Dad: Can you count all the bananas? Let’s see! How many bananas have we counted so far?

- Kid: We haven’t started counting yet!

- Dad: Right, so how many bananas have we counted so far?

- Kid: Zero.

- Dad: That’s right! We’ve counted zero bananas so far. (Dad points to a banana.) Is that a banana?

- Kid: Yes!

- Dad: So how many more bananas did we just count?

- Kid: One more.

- Dad: So how many bananas have we counted so far?

- Kid: One.

- Dad: Great job! We’ve counted one banana so far. (Dad points to a different banana.) Is that a banana?

- Kid: Yes!

- Dad: So how many more bananas did we just count?

- Kid: We counted one more banana.

- Dad: So how many bananas have we counted so far?

- Kid: Two.

- Dad: Great job! We’ve counted two bananas so far. (Dad points to a different banana.) Is that a banana?

- Kid: Yes!

- Dad: So how many more bananas did we just count?

- Kid: We counted one more banana.

- Dad: So how many bananas have we counted so far?

- Kid: Three.

- Dad: Great job! We’ve counted three bananas so far. (Dad points to an orange14.) Is that a banana?

- Kid: No, that’s an orange!

- Dad: So how many more bananas did we just count?

- Kid: Zero. It was not a banana!

- Dad: So how many bananas have we counted so far?

- Kid: Still three.

- Dad: Great job! We’ve counted three bananas so far. (Continues in this manner until Dad points to the twentieth and last fruit on the page, a banana.) Almost done. We’ve counted six bananas so far. Is that a banana?

- Kid: Yes!

- Dad: So how many more bananas did we just count?

- Kid: We counted one more banana.

- Dad: So how many bananas have we counted so far?

- Kid: Seven.

- Dad: We looked at each fruit on the page. How many were bananas?

- Kid: Seven.

- Dad: Great job! Now you know how indicator random variables can be used to count.

In the story, the kid counted the bananas by examining each object, determining whether or not it was a banana, and then incrementing the banana counter by 1 for each object that was a banana (and by 0 for the objects that were not bananas). The kid essentially created an indicator (of “banana”) variable for each object on the page (\(I_{B_1}=1\), \(I_{B_2}=1\), \(I_{B_3}=1\), \(I_{B_4}=0\ldots\), \(I_{B_{20}}=1\)) and then summed these indicators to obtain the total count of bananas. This strategy gives a way of breaking down a complicated counting problem into smaller pieces and counting incrementally.

Example 2.23 illustrates that for two events \(A\) and \(B\) \[\begin{align*} \textrm{I}_{A^c} & = 1 - \textrm{I}_A & & \\ \textrm{I}_{A \cap B} & = \textrm{I}_A \textrm{I}_B & & =\min(\textrm{I}_A, \textrm{I}_B)\\ \textrm{I}_{A \cup B} & = \textrm{I}_A + \textrm{I}_B - \textrm{I}_{A \cap B} & & = \max(I_A, I_B) \end{align*}\]

In particular, the indicator of an intersection is the product of the indicators of each event. The \(\min, \max\), and product formulas work for more than two events, but the addition formula is more complicated15.

2.3.5 Events involving random variables

Many events of interest involve random variables. The event “tomorrow’s high temperature is above 75°F” involves the random variable “tomorrow’s high temperature”. Each possible outcome of tomorrow’s weather conditions will correspond to a value of high temperature, but only some of these outcomes will result in values of high temperature above 75 °F.

The expressions \(X=x\) or \(\{X=x\}\) are shorthand for the event that the random variable \(X\) takes the value \(x\). Remember that any event is a collection of outcomes that satisfy some criteria, a subset of the sample space. So objects like \(\{X=x\}\) are sets representing the outcomes for which the value of the random variable \(X\) is equal to the number \(x\). Remember to think of the capital letter \(X\) as a label standing in for a formula like “the sum of two rolls of a four-sided die” and \(x\) as a dummy variable standing in for a particular value like 3.

| Outcome (First roll, second roll) | X (sum) | Y (max) |

|---|---|---|

| (1, 1) | 2 | 1 |

| (1, 2) | 3 | 2 |

| (1, 3) | 4 | 3 |

| (1, 4) | 5 | 4 |

| (2, 1) | 3 | 2 |

| (2, 2) | 4 | 2 |

| (2, 3) | 5 | 3 |

| (2, 4) | 6 | 4 |

| (3, 1) | 4 | 3 |

| (3, 2) | 5 | 3 |

| (3, 3) | 6 | 3 |

| (3, 4) | 7 | 4 |

| (4, 1) | 5 | 4 |

| (4, 2) | 6 | 4 |

| (4, 3) | 7 | 4 |

| (4, 4) | 8 | 4 |

When dealing with probabilities, it is common to write \(X=3\) instead of16 \(\{X=3\}\), and \(X = 4, Y = 3\) instead of \(\{X = 4\}\cap \{Y = 3\}\); read the comma in \(X = 4, Y = 3\) as “and”. But keep in mind that an expression like “\(X=3\)” really represents an event \(\{X=3\}\), a subset of outcomes of the sample space.

2.3.6 Outcomes, events, and random variables

Outcomes, events, and random variables are some of the main objects of probability. While they are related, these are distinct objects. Thinking in terms of a spreadsheet, an outcome is a row, an event is a subset of rows, and a random variable is a column. Mathematically, an outcome is a point, an event is a set, and a random variable is a function which outputs a number. As such, different operations are valid depending on what you’re dealing with. Don’t confuse operations like \(\cap\) that operate on sets (events, “and”) with operations like \(+\) that operate on numbers and functions (random variables, “plus” meaning addition).

2.3.7 Exercises

Exercise 2.5 Consider the outcome of a sequence of 4 flips of a coin. One random variable is \(X\), the number of heads flipped.

- Explain why \(X\) is a random variable.

- Evaluate each of the following: \(X(HHHH), X(HTHT), X(TTHH)\).

- Identify the possible values of \(X\). Why not let the sample space just consist of this set of possible values?

- What does \(4-X\) represent?

- What does \(X/4\) represent?

Exercise 2.6 The latest series of collectible Lego Minifigures contains 3 different Minifigure prizes (labeled 1, 2, 3). Each package contains a single unknown prize. Suppose we only buy 3 packages and we consider as our sample space outcome the results of just these 3 packages (prize in package 1, prize in package 2, prize in package 3). For example, 323 (or (3, 2, 3)) represents prize 3 in the first package, prize 2 in the second package, prize 3 in the third package. Let \(X\) be the number of distinct prizes obtained in these 3 packages. Let \(Y\) be the number of these 3 packages that contain prize 1.

The sample space consists of 27 outcomes, listed in the table below.

| 111 | 112 | 113 | 121 | 122 | 123 | 131 | 132 | 133 | |

| \(X\) | |||||||||

| \(Y\) | |||||||||

| 211 | 212 | 213 | 221 | 222 | 223 | 231 | 232 | 233 | |

| \(X\) | |||||||||

| \(Y\) | |||||||||

| 311 | 312 | 313 | 321 | 322 | 323 | 331 | 332 | 333 | |

| \(X\) | |||||||||

| \(Y\) |

- Use the table above and evaluate \(X\) and \(Y\) for each of the outcomes.

- Identify the possible values of \(X\).

- Identify the possible values of \(Y\).

- Identify the possible \((X, Y)\) pairs.

- Identify and interpret \(\{X = 1\}\).

- Identify and interpret \(\{X = 2\}\).

- Identify and interpret \(\{X = 3\}\).

- Identify and interpret \(\{Y = 0\}\).

- Identify and interpret \(\{Y = 1\}\).

- Identify and interpret \(\{Y = 2\}\).

- Identify and interpret \(\{Y = 3\}\).

- Identify and interpret \(\{X = 2, Y = 1\}\).

- Identify and interpret \(\{X = Y\}\).

- Let \(I_1\) be the indicator random variable that prize 1 is obtained (in at least one of the three packages). Identify and intepret \(\{I_1 = 0\}\).

- Let \(I_2\) be the indicator random variable that prize 2 is obtained (in at least one of the three packages), and similarly \(I_3\) for prize 3. What is the relationship between \(X\) and \(I_1, I_2, I_3\)?

- How can you write \(Y\) in terms of indicator random variables?

Exercise 2.7 Katniss throws a dart at a circular dartboard with radius 1 foot. (Assume that Katniss’s dart never misses the dartboard.) Let \(X\) be the distance (inches) from the location of the dart to the center of the dartboard.

- Identify (with a picture) and interpret \(\{X \le 1\}\)

- Identify (with a picture) and interpret \(\{1 < X < 2\}\)

- Identify (with a picture) and interpret \(\{X > 11\}\)

- Identify (with a picture) and interpret \(\{X = 0\}\)

- Identify (with a picture) and interpret \(\{X = 1\}\)

2.4 Probability spaces

In this chapter we have defined outcomes, events, and random variables, the main mathematical objects associated with a random phenomenon. But we haven’t actually computed any probabilities yet! So far we have only been concerned with what is possible. You might have noticed that the examples often did not include any assumptions like the “die is fair”, “each object is equally likely to be put in any spot”, or “Regina is more likely to arrive late and Cady is more likely to arrive early”. Now we will start to incorporate assumptions of the random phenomenon to determine how probable various events are.

2.4.1 Probability measures

As we saw in Section 1.3, there are some basic logical consistency requirements that probabilities must satisfy, which are formalized in three “axioms”.

Definition 2.6 A probability measure, typically denoted \(\textrm{P}\), assigns probabilities to events to quantify their relative likelihoods, plausibilities, or degrees of uncertainty according to the assumptions of the model of the random phenomenon. The probability of event17 \(A\) is denoted \(\textrm{P}(A)\).

Any valid probability measure must satisfy the following axioms.

- For any event \(A\), \(0 \le \textrm{P}(A) \le 1\).

- If \(\Omega\) represents the sample space then \(\textrm{P}(\Omega) = 1\).

- Countable additivity. If \(A_1, A_2, A_3, \ldots\) are disjoint events (recall Definition 2.3), then \[ \textrm{P}(A_1 \cup A_2 \cup A_2 \cup \cdots) = \textrm{P}(A_1) + \textrm{P}(A_2) +\textrm{P}(A_3) + \cdots \]

An event \(A\) is something that can happen or can be true; \(\textrm{P}(A)\) quantifies how likely it is that \(A\) will happen or how plausible it is that \(A\) is true. Probabilities are always defined for events (sets) but remember than many events are defined in terms of random variables. For example, if \(X\) is tomorrow’s high temperature (degrees F) we might be interested in \(\textrm{P}(\{X>80\})\), the probability of the event that tomorrow’s high temperature is above 80 degrees F. If \(Y\) is the amount of rainfall tomorrow (inches) we might be interested in \(\textrm{P}(\{X > 80\}\cap \{Y < 2\})\), the probability of the event that tomorrow’s high temperature is above 80 degrees F and the amount of rainfall is less than 2 inches. To simplify notation, it is common to write \(\textrm{P}(X>80)\) instead of \(\textrm{P}(\{X>80\})\), or \(\textrm{P}(X > 80, Y < 2)\) instead of \(\textrm{P}(\{X > 80\}\cap \{Y < 2\})\). Read the comma in \(\textrm{P}(X > 80, Y < 2)\) as “and”. But keep in mind that an expression like “\(X>80\)” really represents an event \(\{X>80\}\).

The three axioms require that probabilities of different events must fit together in a logically coherent way.

The requirement \(0\le \textrm{P}(A)\le 1\) makes sense in light of the relative frequency interpretation: an event \(A\) can not occur on more than 100% of repetitions or less than 0% of repetitions of the random phenomenon.

The requirement that \(\textrm{P}(\Omega)=1\) just ensures that the sample space accounts for all of the possible outcomes. Basically, \(\textrm{P}(\Omega)=1\) says that on any repetition of the random phenomenon, “something has to happen”. Roughly, \(\textrm{P}(\Omega)=1\) implies that all outcomes taken together need to account for 100% of the probability. If \(\textrm{P}(\Omega)\) were less than 1, then the sample space hasn’t accounted for all of the possible outcomes.

Event \(A_1 \cup A_2 \cup \cdots\) is the event that \(A_1\) occurs OR \(A_2\) occurs OR… In other words, \(A_1 \cup A_2 \cup \cdots\) is the event that at least one of the \(A_i\)’s occur. Countable additivity says that as long as events share no outcomes in common, then the probability that at least one of the events occurs is equal to the sum of the probabilities of the individual events. In Example 1.7, the events \(B\)=“the Braves win the 2023 World Series” and \(A\)=“the Rays win the 2023 World Series” are disjoint, \(A\cap B = \emptyset\); in a single World Series, both teams cannot win. If \(\textrm{P}(B) = 0.19\) and \(\textrm{P}(A) = 0.16\), then the probability of \(A\cup B\), the event that either the Rays or the Braves win, must be \(\textrm{P}(A\cup B)=0.29\).

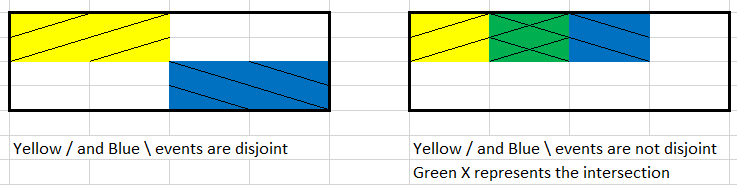

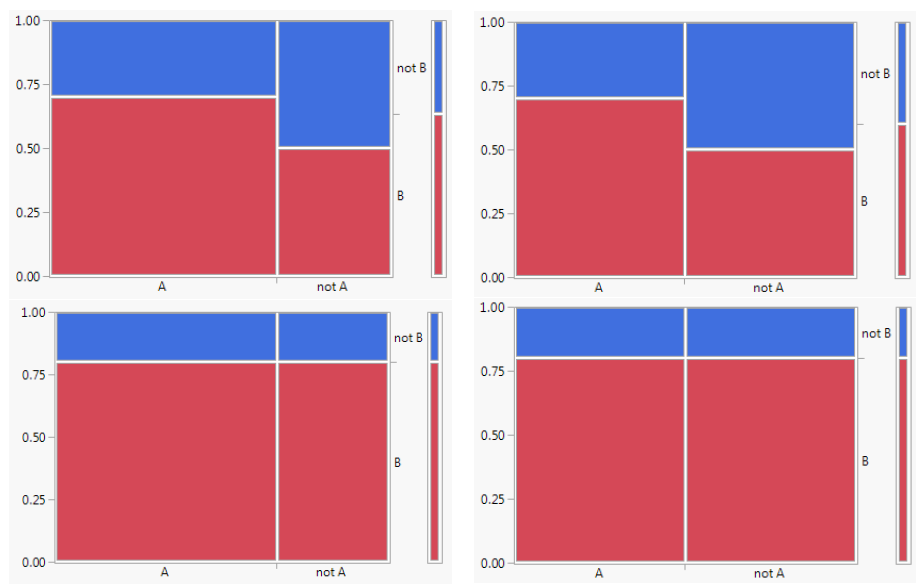

Countable additivity can be understood through a diagram with areas representing probabilities, as in the figure below which represents two events (yellow / and blue \). On the left, there is no “overlap” between areas so the total area is the sum of the two pieces; this depicts countable additivity for two disjoint events. On the right, there is overlap between the two areas, so simply adding the two areas “double counts” the intersection (green \(\times\)) and does not result in the correct total area. Countable additivity applies to any countable number18 of events, as long as there is no “overlap”.

The three axioms of a probability measure are simply minimal logical consistency requirements that must be satisfied by any probability model to ensure that probabilities fit together in a coherent way. There are also many physical aspects of the random phenomenon or assumptions (e.g. “fairness”, independence, conditional relationships) that must be considered when determining a reasonable probability measure for a particular situation. Sometimes \(\textrm{P}(A)\) is defined explicitly for an event \(A\) via a formula. But it is much more common for a probability measure to be defined only implicitly through modeling assumptions; probabilities of events then follow from the axioms and related properties.

2.4.2 Some probability measures for a four-sided die

Consider a single roll of a four-sided die. The sample space consists of four possible outcomes, \(\Omega = \{1, 2, 3, 4\}\). Events concern what might happen on a single roll. For example, if \(A\) is the event that we roll an odd number then \(A = \{1, 3\}\); “roll an odd number” occurs if we roll a 1 so 1 is an \(A\), or “roll an odd number” occurs if we roll a 3 so 3 is in \(A\). Table 2.6 lists the collection of all events.

| Event | Description | Probability of event assuming a fair die |

|---|---|---|

| \(\emptyset\) | Roll nothing (not possible) | 0 |

| \(\{1\}\) | Roll a 1 | 1/4 |

| \(\{2\}\) | Roll a 2 | 1/4 |

| \(\{3\}\) | Roll a 3 | 1/4 |

| \(\{4\}\) | Roll a 4 | 1/4 |

| \(\{1, 2\}\) | Roll a 1 or a 2 | 2/4 |

| \(\{1, 3\}\) | Roll a 1 or a 3 | 2/4 |

| \(\{1, 4\}\) | Roll a 1 or a 4 | 2/4 |

| \(\{2, 3\}\) | Roll a 2 or a 3 | 2/4 |

| \(\{2, 4\}\) | Roll a 2 or a 4 | 2/4 |

| \(\{3, 4\}\) | Roll a 3 or a 4 | 2/4 |

| \(\{1, 2, 3\}\) | Roll a 1, 2, or 3 (a.k.a. do not roll a 4) | 3/4 |

| \(\{1, 2, 4\}\) | Roll a 1, 2, or 4 (a.k.a. do not roll a 3) | 3/4 |

| \(\{1, 3, 4\}\) | Roll a 1, 3, or 4 (a.k.a. do not roll a 2) | 3/4 |

| \(\{2, 3, 4\}\) | Roll a 2, 3, or 4 (a.k.a. do not roll a 1) | 3/4 |

| \(\{1, 2, 3, 4\}\) | Roll something | 1 |

When outcomes are equally likely, we find the probability of an event by counting the number of outcomes that satisfy the event.

The probability measure \(\textrm{P}\) in Example 2.28 satisfies all the axioms and so it is a valid probability measure. However, assuming that the outcomes are equally likely is a much stricter condition than the basic logical consistency requirements of the axioms. There are many other possible probability measures, like in the following.

| Event | Description | Probability of event assuming a particular weighted die |

|---|---|---|

| \(\emptyset\) | Roll nothing (not possible) | 0 |

| \(\{1\}\) | Roll a 1 | 0.1 |

| \(\{2\}\) | Roll a 2 | 0.2 |

| \(\{3\}\) | Roll a 3 | 0.3 |

| \(\{4\}\) | Roll a 4 | 0.4 |

| \(\{1, 2\}\) | Roll a 1 or a 2 | 0.3 |

| \(\{1, 3\}\) | Roll a 1 or a 3 | 0.4 |

| \(\{1, 4\}\) | Roll a 1 or a 4 | 0.5 |

| \(\{2, 3\}\) | Roll a 2 or a 3 | 0.5 |

| \(\{2, 4\}\) | Roll a 2 or a 4 | 0.6 |

| \(\{3, 4\}\) | Roll a 3 or a 4 | 0.7 |

| \(\{1, 2, 3\}\) | Roll a 1, 2, or 3 (a.k.a. do not roll a 4) | 0.6 |

| \(\{1, 2, 4\}\) | Roll a 1, 2, or 4 (a.k.a. do not roll a 3) | 0.7 |

| \(\{1, 3, 4\}\) | Roll a 1, 3, or 4 (a.k.a. do not roll a 2) | 0.8 |

| \(\{2, 3, 4\}\) | Roll a 2, 3, or 4 (a.k.a. do not roll a 1) | 0.9 |

| \(\{1, 2, 3, 4\}\) | Roll something | 1 |

The symbol \(\textrm{P}\) is more than just shorthand for the word “probability”. \(\textrm{P}\) denotes the underlying probability measure, which represents all the assumptions about the random phenomenon. Changing assumptions results in a change of the probability measure and a different probability model. We often consider several probability measures for the same sample space and collection of events; these several measures represent different sets of assumptions or available information and different probability models.

The probability measure \(\textrm{P}\) in Example 2.28 corresponds to the assumption of a fair die (equally likely outcomes). With this measure \(\textrm{P}(A) = 2/4=0.5\) for \(A = \{1, 3\}\). But under the probability measure \(\textrm{Q}\) corresponding to the weighted die in Example 2.29, \(\textrm{Q}(A) = 0.4\). The outcomes and events are the same in both scenarios, because both scenarios involve a four sided-die. What is different is the probability measure that assigns probabilities to the events. One scenario assumes the die is fair while the other assumes the die has a particular weighting, resulting in two different probability measures.

Both probability measures \(\textrm{P}\) and \(\textrm{Q}\) can be written as explicit set functions: for an event \(A\)

\[\begin{align*} \textrm{P}(A) & = \frac{\text{number of outcomes that satisfy $A$}}{4}, & & {\text{a fair four-sided die}} \\ \textrm{Q}(A) & = \frac{\text{sum of elements in $A$}}{10}, & & {\text{a specific weighted four-sided die}} \end{align*}\]

We provide the above descriptions to illustrate that a probability measure operates on sets. However, in many situations there does not exist a simple closed form expression for the set function defining the probability measure which maps events to probabilities.

| Event | Description | Probability of event assuming a particular weighted die |

|---|---|---|

| \(\emptyset\) | Roll nothing (not possible) | 0 |

| \(\{1\}\) | Roll a 1 | 4/15 |

| \(\{2\}\) | Roll a 2 | 6/15 |

| \(\{3\}\) | Roll a 3 | 3/15 |

| \(\{4\}\) | Roll a 4 | 2/15 |

| \(\{1, 2\}\) | Roll a 1 or a 2 | 10/15 |

| \(\{1, 3\}\) | Roll a 1 or a 3 | 7/15 |

| \(\{1, 4\}\) | Roll a 1 or a 4 | 6/15 |

| \(\{2, 3\}\) | Roll a 2 or a 3 | 9/15 |

| \(\{2, 4\}\) | Roll a 2 or a 4 | 8/15 |

| \(\{3, 4\}\) | Roll a 3 or a 4 | 5/15 |

| \(\{1, 2, 3\}\) | Roll a 1, 2, or 3 (a.k.a. do not roll a 4) | 13/15 |

| \(\{1, 2, 4\}\) | Roll a 1, 2, or 4 (a.k.a. do not roll a 3) | 12/15 |

| \(\{1, 3, 4\}\) | Roll a 1, 3, or 4 (a.k.a. do not roll a 2) | 9/15 |

| \(\{2, 3, 4\}\) | Roll a 2, 3, or 4 (a.k.a. do not roll a 1) | 11/15 |

| \(\{1, 2, 3, 4\}\) | Roll something | 1 |

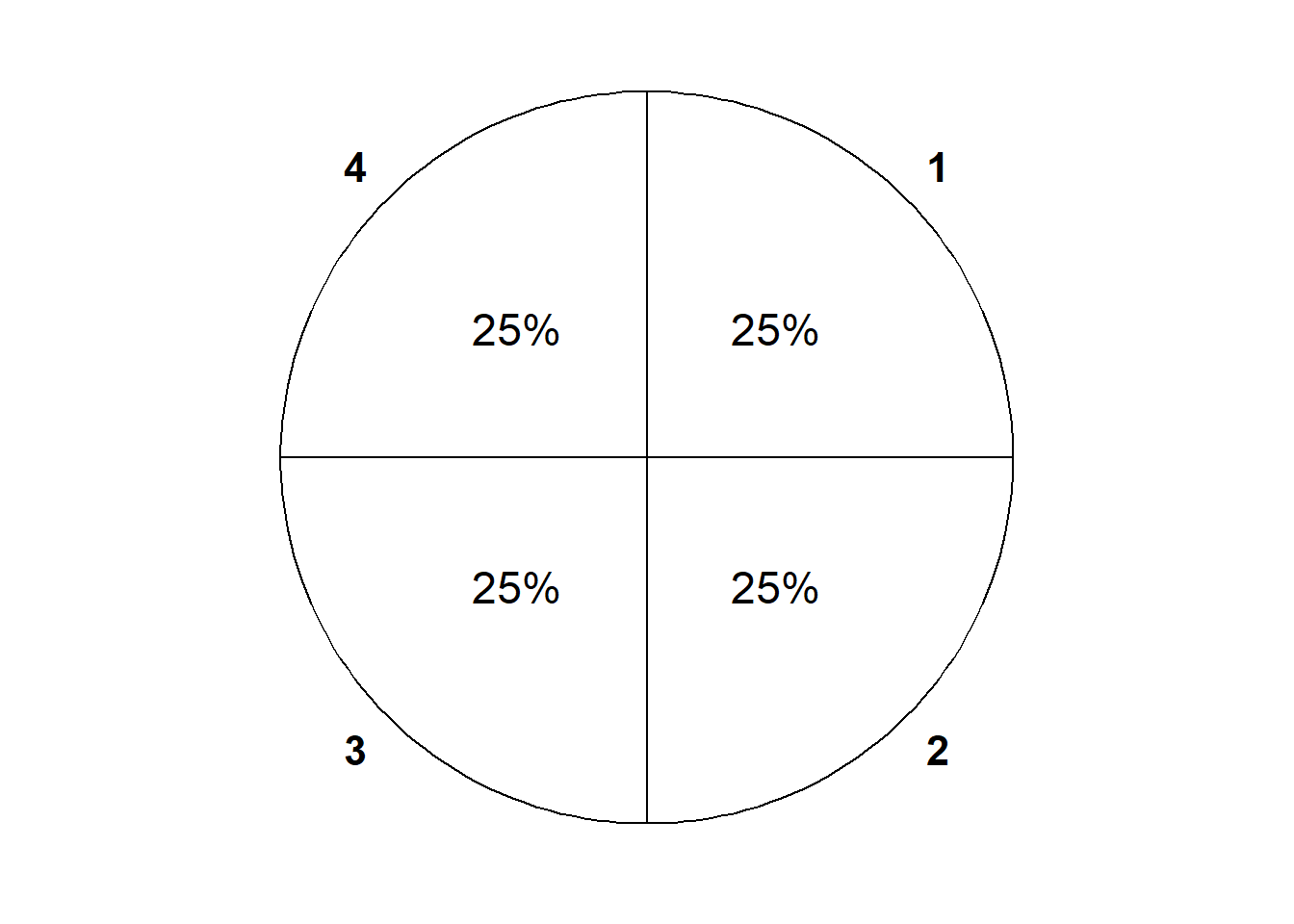

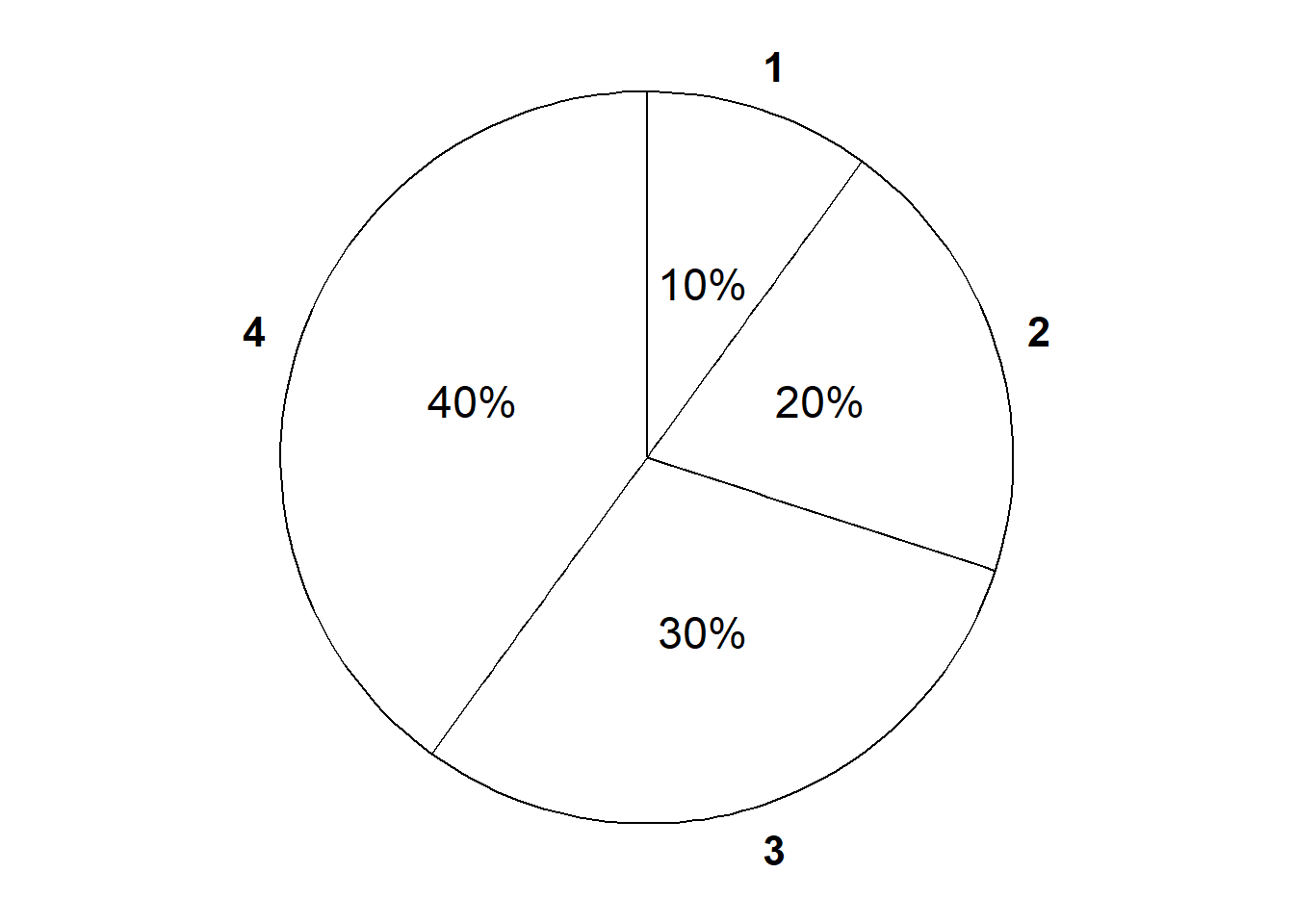

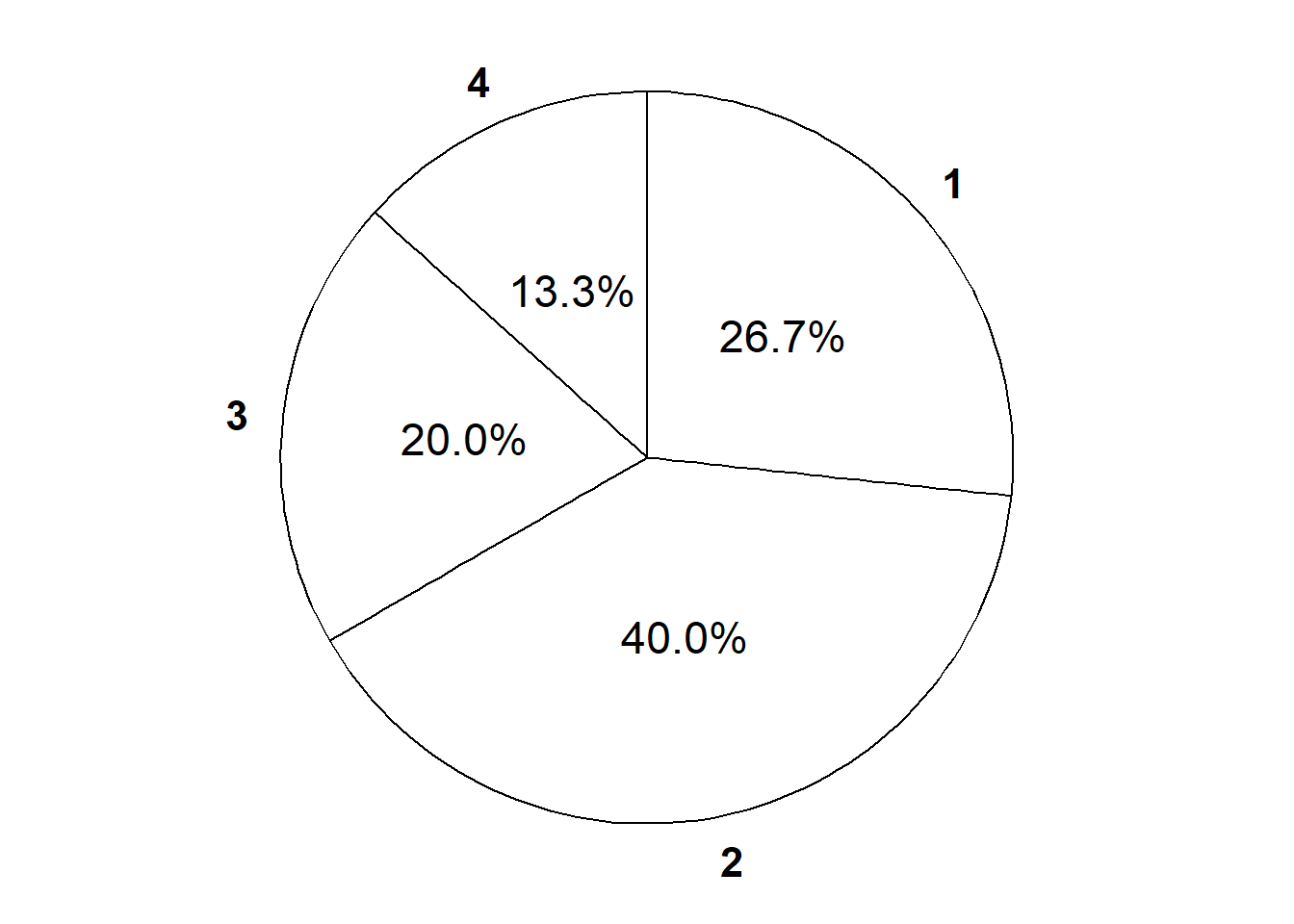

The die rolling example is not the most exciting or practical scenario. But the example does illustrate the idea of several probability measures, each corresponding to a different set of assumptions about the random phenomenon. If it’s difficult to imagine how to physically weight a die in these particular ways, consider the spinners (like from a kids game) in Figure 2.9).

It is usually reasonable to assume that dice are fair, but most real world situations are not as simple as rolling dice. Just because a situation has 16 possible outcomes doesn’t mean the outcomes have to be equally likely. For example, there might be 12 contestants on your favorite reality competition show, but that doesn’t mean that all of the 12 contestants are equally likely to win the season.

2.4.3 Some probability measures in the meeting problem

Recall the meeting problem. The general problem involves multiple people, but we’ll first consider the arrival time of just a single person, who we’ll call Han22.

Suppose that Han’s arrival time will definitely be between noon and 1:00, so that the sample space—with time measured in minutes after noon, including fractions of a minute—is \(\Omega = [0, 60]\).

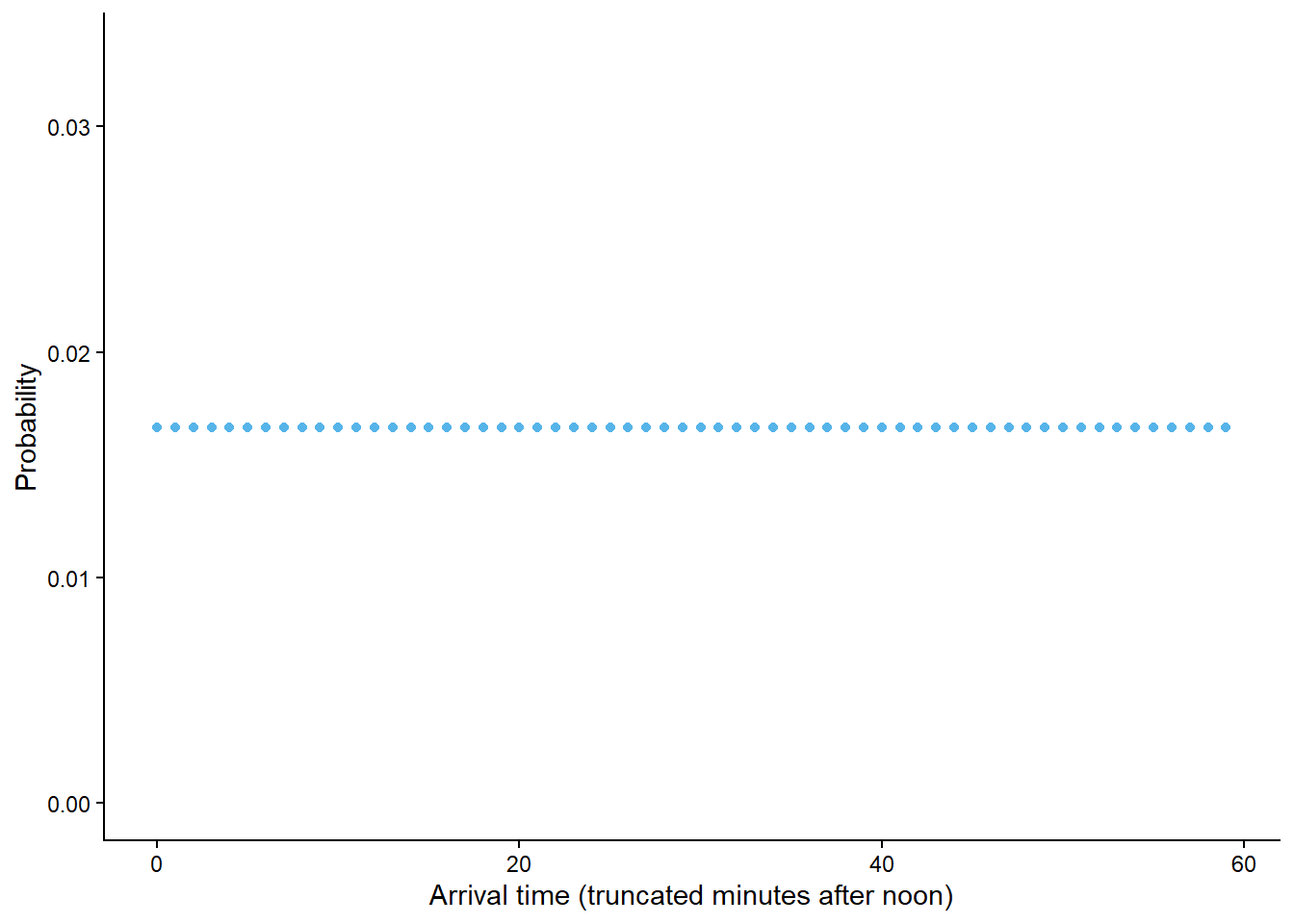

Example 2.28 illustrated that for a finite sample space with equally likely outcomes, computing the probability of an event reduces to counting the number of outcomes that satisfy the event and dividing by the total number of possible outcomes. The continuous analog of equally likely outcomes is a uniform probability measure. When the sample space is uncountable, size is measured continuously (length, area, volume) rather that discretely (counting).

\[ \textrm{P}(A) = \frac{\text{size of } A}{\text{size of } \Omega} \qquad \text{if $\textrm{P}$ is a uniform probability measure} \]

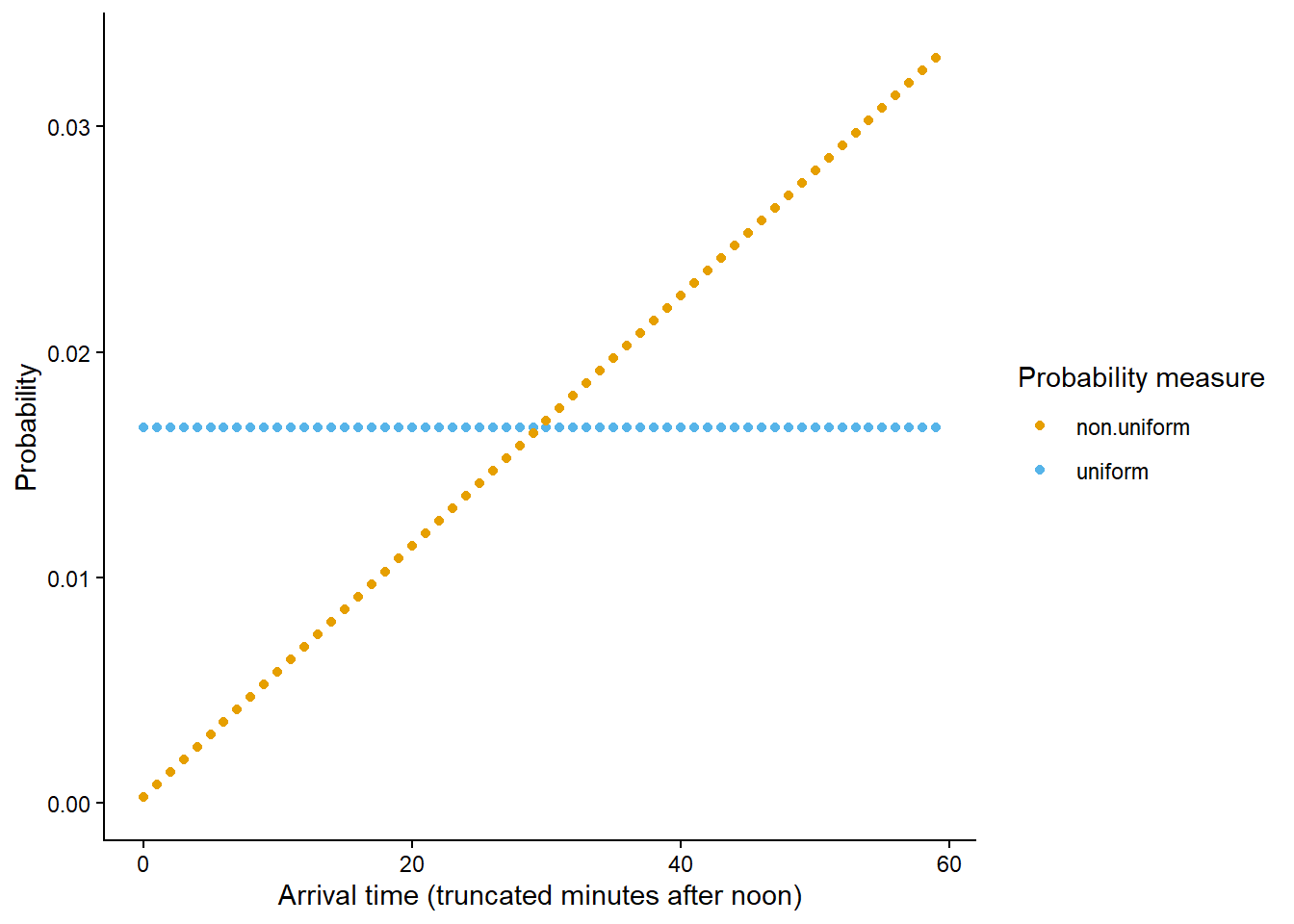

The uniform probability measure in Example 2.32 is just one probability measure for Han’s arrival, reflecting an assumption that Han is “equally likely” to arrive at any time between noon and 1:00. Now we’ll model Han’s arrival time with a non-uniform probability measure which reflects that he is more likely to arrive near certain times than others.

The probability measure in Example 2.33 is a non-uniform measure. Han is much more likely to arrive between 12:45 and 1:00 than between 12:00 and 12:15, even though both these intervals have the same length.

The last part in Example 2.34 might seem counterintuitive at first. There was nothing special about 12:00; pick any precise time in the continuous interval from noon to 1:00, and the probability that Han arrives at that exact time, with infinite precision, is 0. This idea can be understood as a limit. The probability that Han arrives within one minute of the specified time is small, within one second of the specified time is even smaller, within one millisecond of the specified time is even smaller still; with infinite precision these time increments can get smaller and smaller indefinitely. Of course, infinite precision is not practical, but assuming the possible arrival times are represented by a continuous interval provides a reasonable mathematical model. Even though any particular time has probability 0 of being the precise arrival time, intervals of time still have positive probability of containing the arrival time. When we ask a question like “what is the probability that Han arrives at noon”, “at noon” really means “within 1 minute of noon” or “within 1 second of noon” or within whatever degree of precision is good enough for our practical purposes, and such intervals have non-zero probability.

Continuous sample spaces introduce some complications that we didn’t encounter when dealing with discrete sample spaces. For a continuous sample space, the probability of any particular outcome24 is 0. However, Example 2.35 illustrates that in some sense certain outcomes can be more likely than others; Han is more likely to arrive close to 1:00 than close to noon. For continuous sample spaces it makes more sense to consider “close to” probabilities rather than “equals to” probabilities. We will investigate related ideas in much more detail as we go.

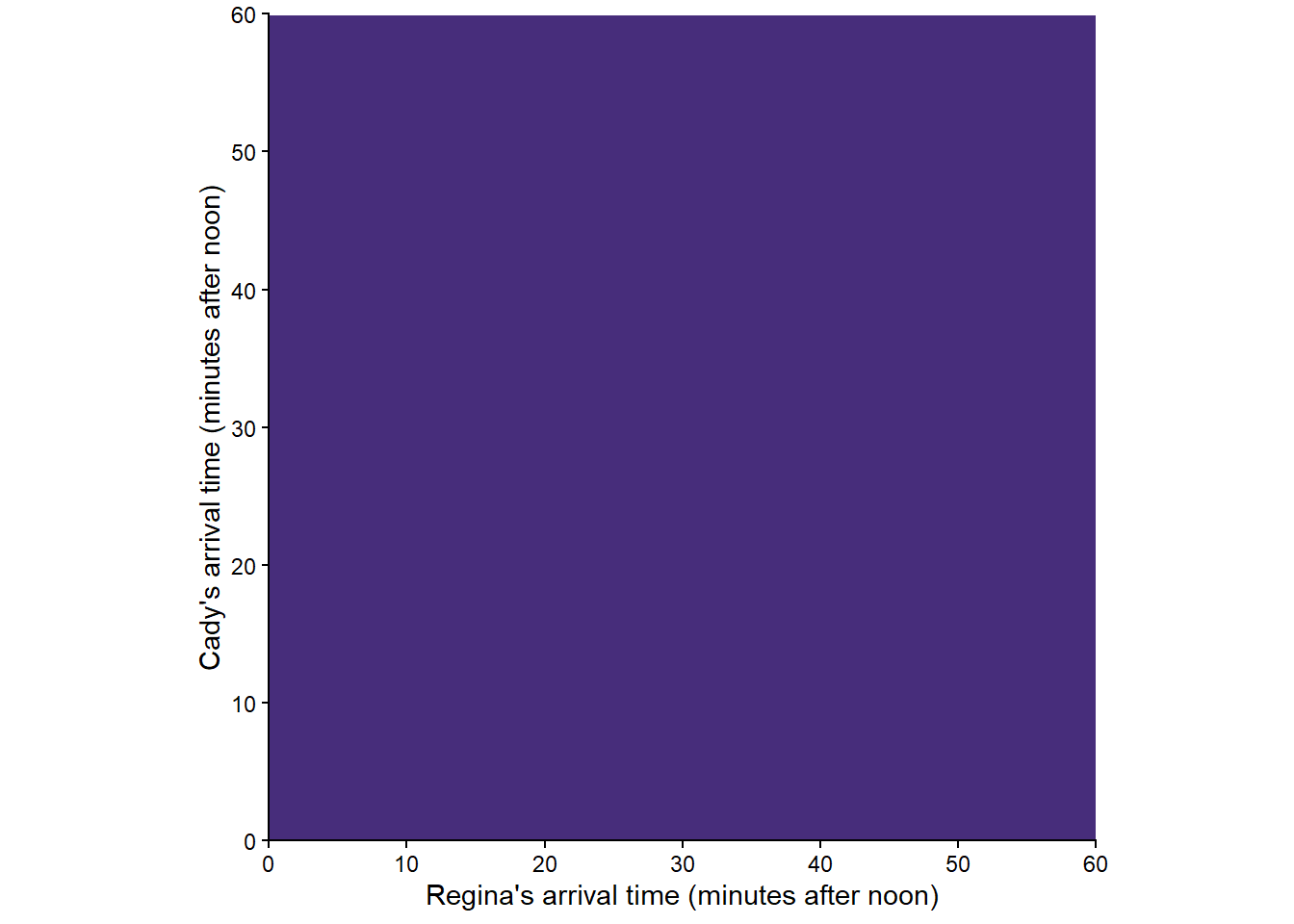

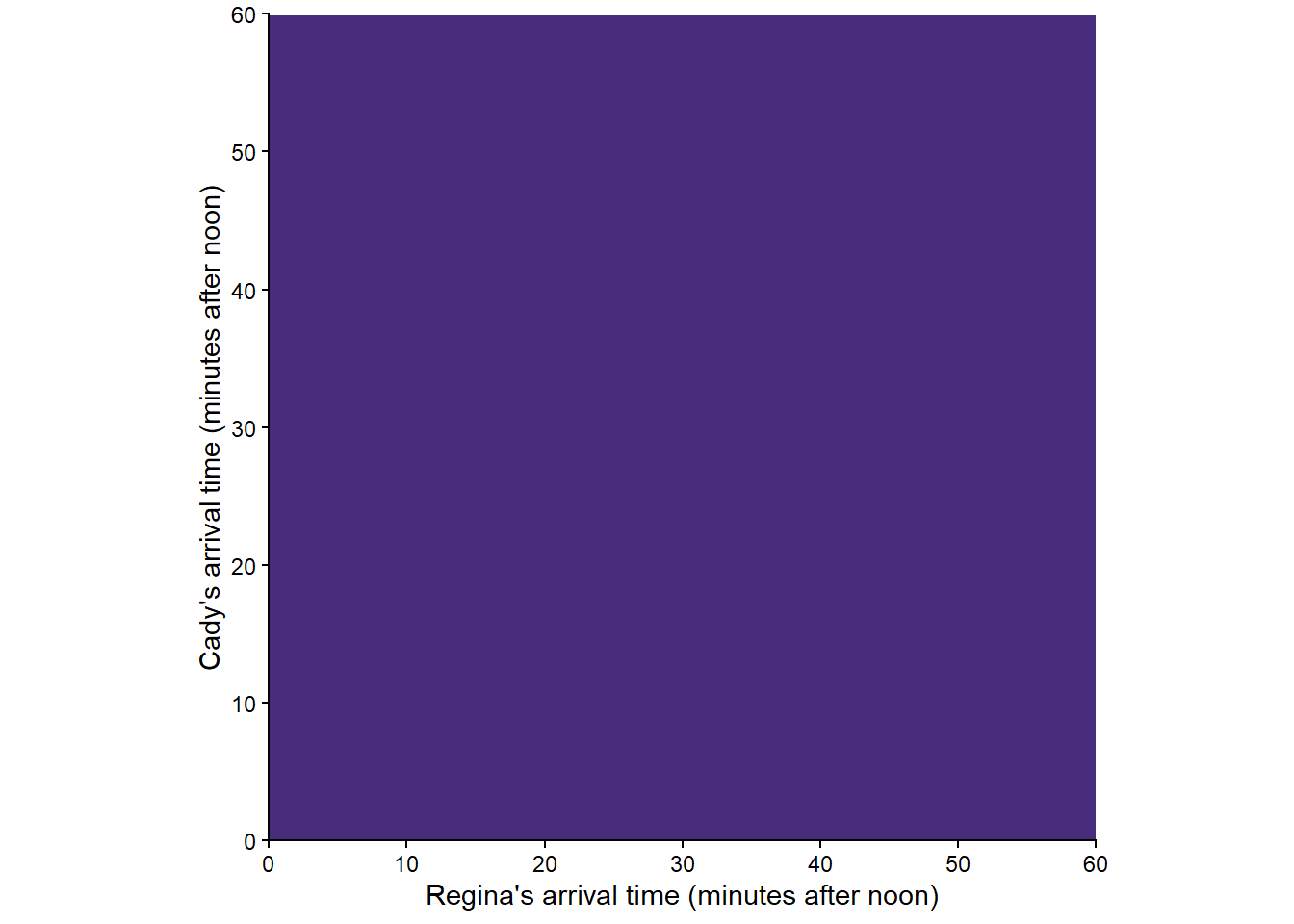

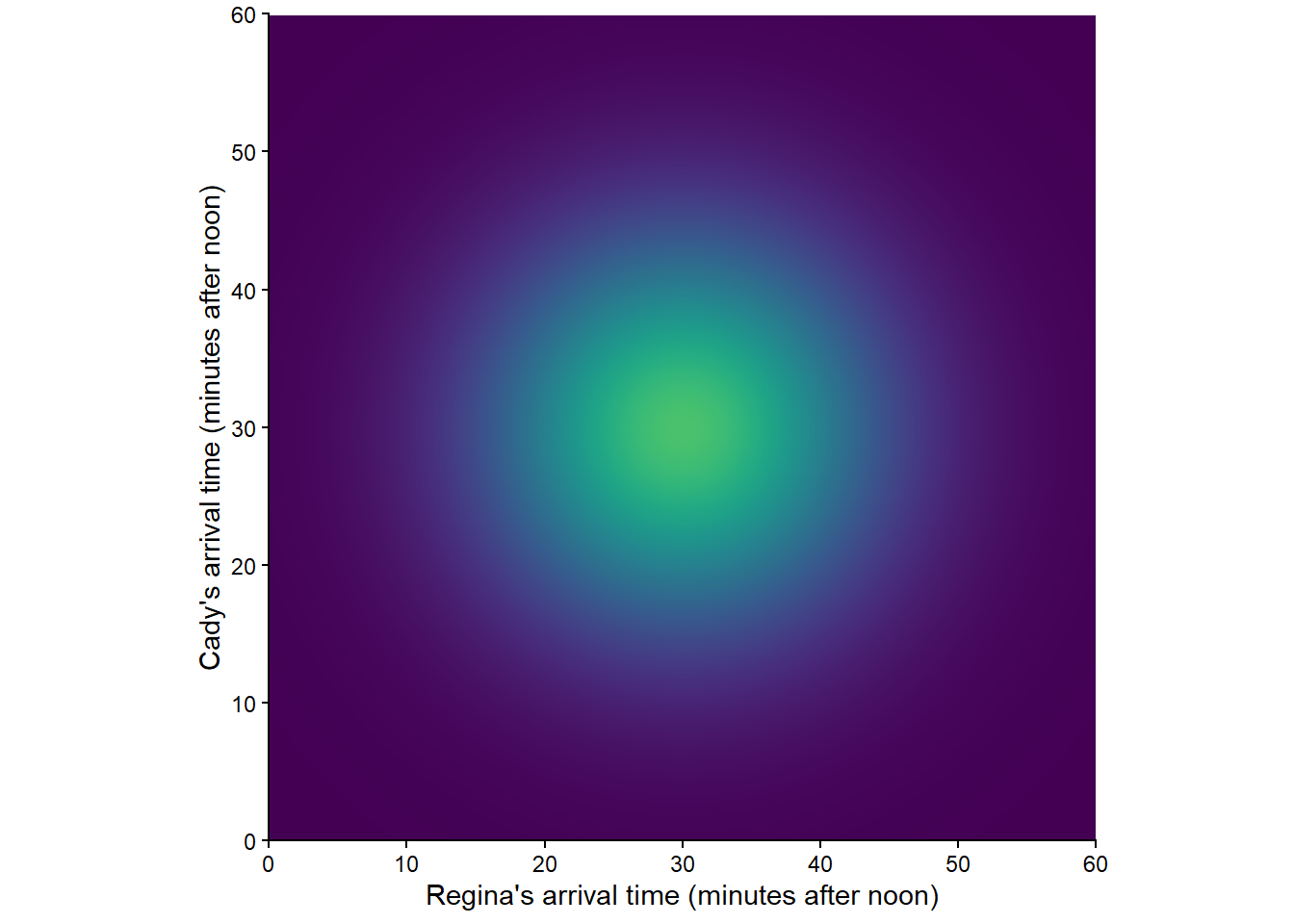

Now we’ll return to the two-person (Regina, Cady) meeting problem from Example 2.3, with sample space depicted in Figure 2.2. We will use pictures to represent a few probability measures corresponding to different assumptions about the arrival times. In the pictures below, lighter colors represent regions of outcomes that are more likely; darker colors, less likely.

Figure 2.12 corresponds to a uniform probability measure under which all outcomes are “equally likely”. This probability measure would be appropriate if we assume that Regina and Cady each arrive at a time uniformly at random between noon and 1, independently of each other.

Example 2.37 and Example 2.34 illustrate similar ideas. Regardless of the precise time in the continuous interval \([0, 60]\) at which Regina arrives, the probability that Cady arrives at that exact time, with infinite precision, is 0. In practice, if we’re interested in “the probability that Regina at Cady arrive at the same time”, we really mean “close enough to the same time”, where “close enough” could be within one minute or one second or whatever degree of precision is good enough for practical purposes.

Most random phenomenon do not involve equally likely outcomes or uniform probability measures. Even when the underlying outcomes are equally likely, the values of related random variables are usually not. Therefore, most interesting probability problems involve “non-uniform” probability measures.

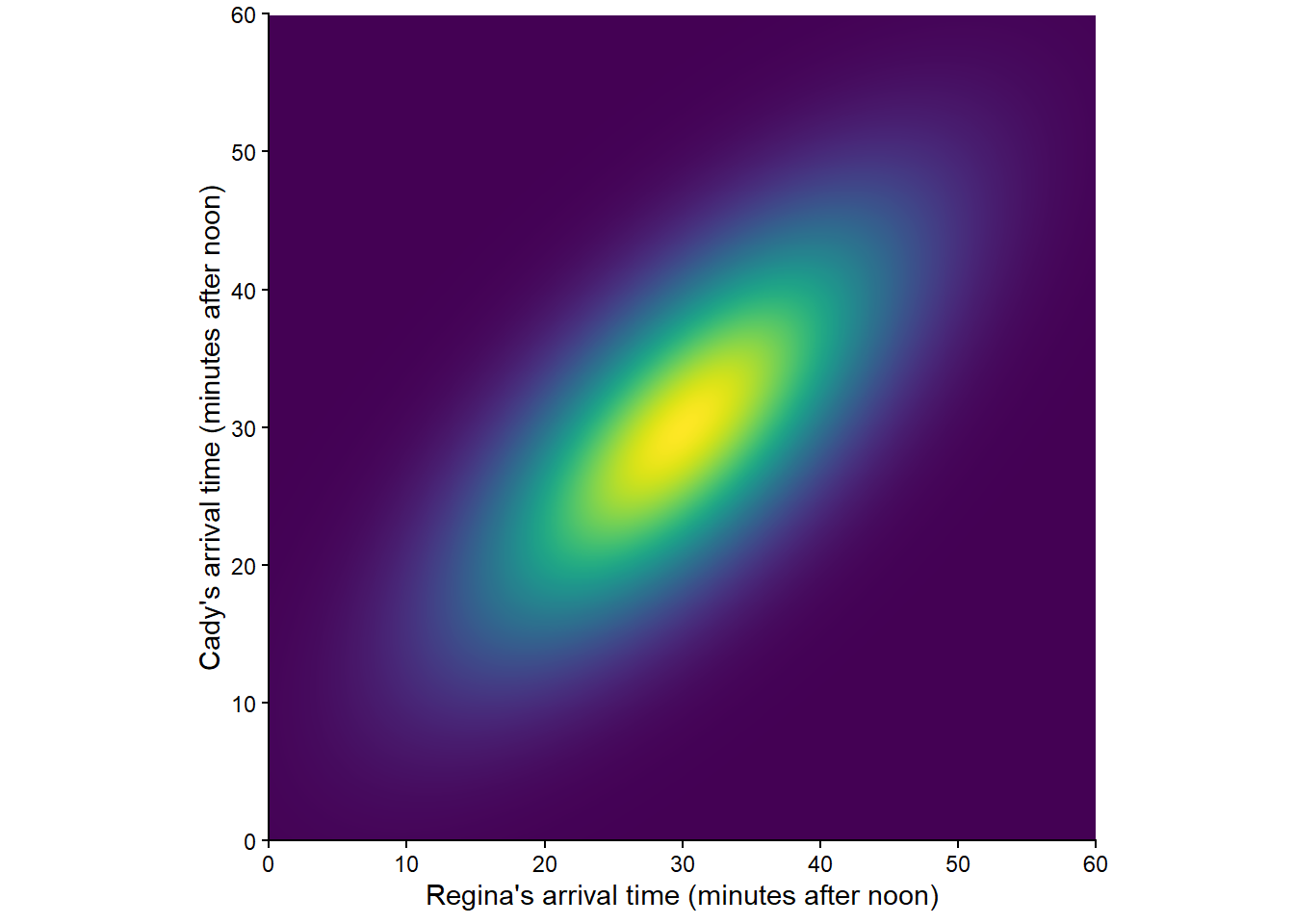

Figure 2.14 corresponds to one non-uniform probability measure for the two-person meeting problem; certain outcomes are more likely than others. (Lighter colors represent regions of outcomes that are more likely; darker colors, less likely.) Such a probability measure would be appropriate if we assume that Regina and Cady each are more likely to arrive around 12:30 than noon or 1:00, independently of each other. Switching from the uniform probability measure represented by Figure 2.12 to the non-uniform one represented by Figure 2.14 would change the probability of the events in Example 2.36 and Example 2.37. (We’ll see how to compute probabilities for non-uniform measures later.)

Figure 2.15 corresponds to another “non-uniform” probability measure. Such a probability measure would be appropriate if we assume that Regina and Cady each are more likely to arrive around 12:30 than noon or 1:00, but they coordinate their arrivals so they are more likely to arrive around the same time.

There are many other probability measures for the meeting problem, representing different sets of assumptions. Each probability measure assigns a probability to events like “Cady arrives first”, “both arrive before 12:20”, and “the first person to arrive has to wait less than 15 minutes for the second to arrive”, and these probabilities can differ between models.

2.4.4 Some properties of probability measures

Many other properties follow from the axioms, some of which we state below. Don’t let notation or names like the “complement rule” confuse you. We have already successfully used all of the properties below intuitively when working with two-way tables. All that is new in this section is mathematical formalism. Yes, getting comfortable with proper notation is part of learning the language of probability. But don’t let formality get in the way of your intuition. Continue to use the ideas from Chapter 1, including tools like two-way tables.

The main “meat” of the axioms is countable additivity. Thus, the key to many proofs of probability properties is to express relevant events in terms of unions of disjoint events. (Proof are included in the footnotes.)

Lemma 2.2 (Complement rule) For any event25 \(A\), \(\textrm{P}(A^c) = 1 - \textrm{P}(A)\).

The complement rule follows from the fact that an event either happens or it doesn’t. We’ll see that it is sometimes more convenient to compute directly the probability that an event does not happen and then use the complement rule.

Lemma 2.3 (Subset rule) If \(A \subseteq B\) then26 \(\textrm{P}(A) \le \textrm{P}(B)\).

The subset rule says that if every outcome that satisfies event \(A\) also satisfies event \(B\) then the probability of event \(B\) must be at least as large as the probability of event \(A\). We saw an application of the subset rule in Example 1.9.

Lemma 2.4 (Addition rule for two events) If \(A\) and \(B\) are any two events then27

\[\begin{align*} \textrm{P}(A\cup B) = \textrm{P}(A) + \textrm{P}(B) - \textrm{P}(A \cap B) \end{align*}\]

The addition rule for more than two events is complicated28 (unless the events are disjoint). For example, the addition rule for three events is \[\begin{align*} \textrm{P}(A\cup B\cup C) & = \textrm{P}(A) + \textrm{P}(B) + \textrm{P}(C)\\ & \qquad - \textrm{P}(A\cap B) - \textrm{P}(A \cap C) - \textrm{P}(B \cap C)\\ & \qquad + \textrm{P}(A \cap B \cap C). \end{align*}\]

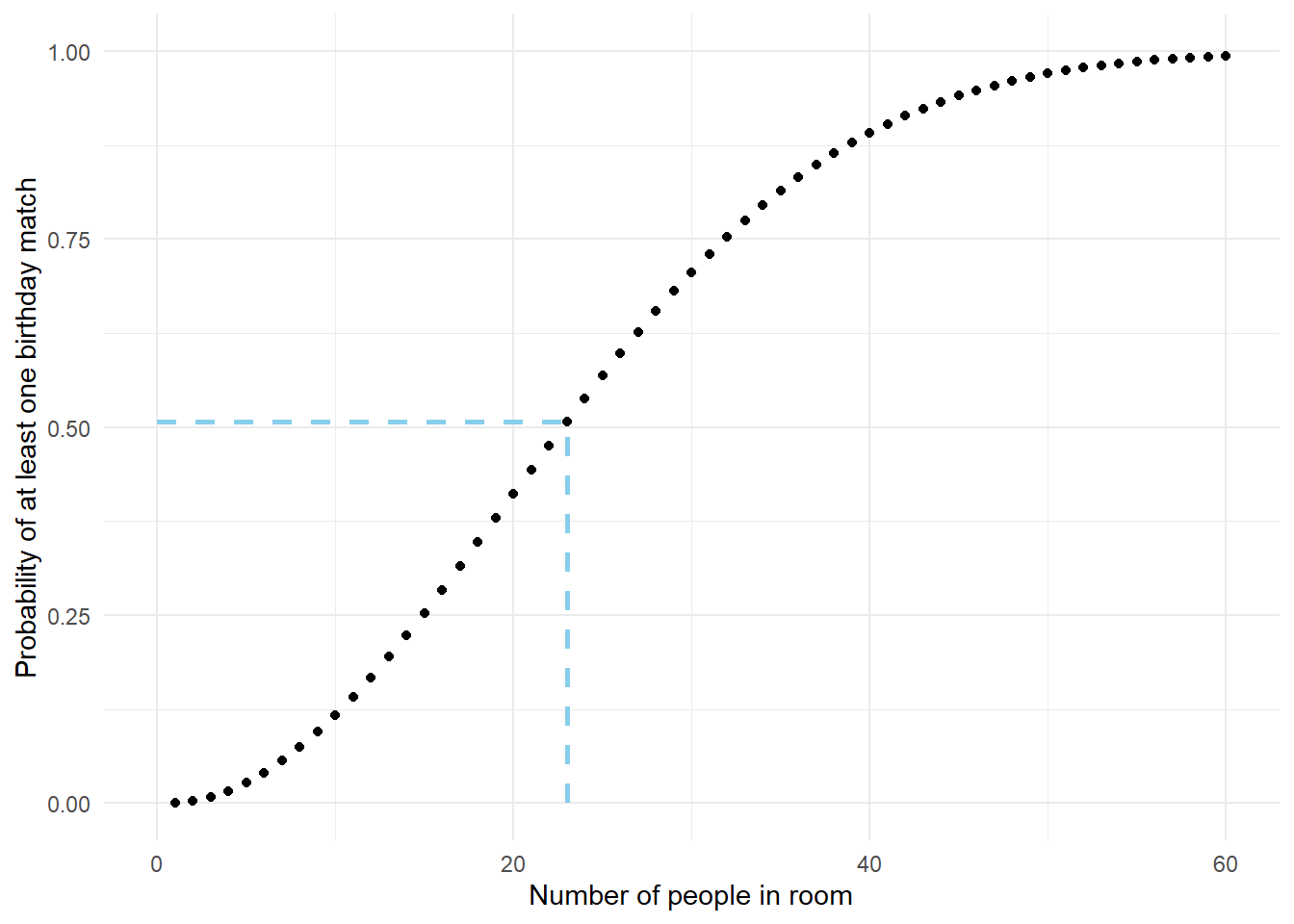

Many problems involve finding the “probability of at least one…” On the surface such problems involve unions (“at least one of events \(A_1, A_2, \ldots\) occur if event \(A_1\) occurs OR event \(A_2\) occurs OR…”) Since the general addition rule for multiple events is complicated, unless the events are disjoint it is usually more convenient to use the complement rule and compute “the probability of at least one…” as one minus “the probability of none…” The “probability of none…” involves intersections (“none of the events \(A_1, A_2, \ldots\) occur if event \(A_1\) does not occur AND event \(A_2\) does not occur AND…”). We will see more about probabilities of intersections later.

Lemma 2.5 (Law of total probability) If \(C_1, C_2, C_3\ldots\) are disjoint events with \(C_1\cup C_2 \cup C_3\cup \cdots =\Omega\), then29

\[\begin{align*} \textrm{P}(A) & = \textrm{P}(A \cap C_1) + \textrm{P}(A \cap C_2) + \textrm{P}(A \cap C_3) + \cdots \end{align*}\]

Since \(C\) and \(C^c\) are disjoint with \(C \cup C^c = \Omega\), a special case is

\[\begin{align*} \textrm{P}(A) & = \textrm{P}(A \cap C) + \textrm{P}(A \cap C^c) \end{align*}\]

In the law of total probability the events \(C_1, C_2, C_3, \ldots\), which represent “cases”, form a partition of the sample space; each outcome in the sample space satisfies exactly one of the cases \(C_i\). The law of total probability says that we can compute the “overall” probability \(\textrm{P}(A)\) by breaking \(A\) down into pieces and then summing the case-by-case probabilities \(\textrm{P}(A\cap C_i)\). We use the law of total probability intuitively when we sum across rows and columns in two-way tables. (Later we will see a different and more useful expression of the law of total probability, involving conditional probabilities.)

The following example is one we have basically covered before, Example 1.15, but now we use mathematical notation and properties. However, the ideas are the same as we discussed in Example 1.15.

The following example involves randomly selecting a U.S. household. Note that while “randomly select” is commonly used terminology, it is not the best wording. Remember that “random” simply means uncertain, so technically “randomly select” just means selecting in a way that the outcome is uncertain. Suppose I want to “randomly select” one of two households, A or B. I could put 10 tickets in a hat, with 9 labeled A and 1 labeled B, and then draw a ticket; this is random selection because the outcome of the draw is uncertain. However, what is often meant by “randomly select” is selecting in a way that each outcome is equally likely. To give households A and B the same chance of being selected, I would put a single ticket for each in the hat. Randomly selecting in a way that each outcome is equally likely could be described more precisely as “selecting uniformly at random”. (We will discuss equally likely outcomes in more detail later.)

Probabilities involving multiple events, such as \(\textrm{P}(A \cap B)\) or \(\textrm{P}(X>80, Y<2)\), are often called joint probabilities. Note that the axioms do not specify any direct requirements on probabilities of intersections. In particular, is not necessarily true that \(\textrm{P}(A\cap B)\) equals \(\textrm{P}(A)\textrm{P}(B)\). It is true that probabilities of intersections can be obtained by multiplying, but the product generally involves at least one conditional probability that reflects any association between the events involved. In general, joint probabilities (\(\textrm{P}(A \cap B)\)) can not be computed based on the individual probabilities (\(\textrm{P}(A)\), \(\textrm{P}(B)\)) alone. We will explore this topic in more depth later.

2.4.5 Probability models

A probability model (or probability space) puts all the objects we have seen so far in this chapter together in a model for the random phenomenon. Think of a probability model31 as the collection of all outcomes, events, and random variables associated with a random phenomenon along with the probabilities of all events of interest (and distributions of random variables) under the assumptions of the model.

There will be many probability measures that satisfy the logical consistency requirements of the probability axioms. Which one is most appropriate depends on the assumptions about the random phenomenon. We will study a variety of commonly used probability models throughout the book.

Perhaps the concept of multiple potential probability measures is easier to understand in a subjective probability situation. For example, each model that is used to forecast the 2024-2025 NFL season corresponds to a probability measure which assigns probabilities to events like “the Eagles win the 2025 Superbowl”. Different sets of assumptions and models can assign different probabilities for the same events. As another example, the weather forecaster on one local news station might report that the probability of rain tomorrow is 0.6, while an online source might report it as 0.5. Each weather forecasting model corresponds to a different probability measure which encodes a set of assumptions about the random phenomenon.

Before moving on, we want to reiterate: Most random phenomenon do not involve equally likely outcomes or uniform probability measures. Even when the underlying outcomes are equally likely, the values of related random variables are usually not. Equally like outcomes or uniform probability measures are the simplest probability measures, and therefore are the ones we typically encounter first. But don’t let that fool you; most interesting probability problems involve non-equally likely outcomes or non-uniform probability measures.

It’s easy to get confused between things like events, random variables, and probabilities, and the symbols that represent them. But a strong understanding of these fundamental concepts will help you solve probability problems. Examples like the following do more than encourage proper use of notation. Explaining to Donny why he is wrong will help you better understand the objects that symbols represent, how they are different from one another, and how they connect to real-world contexts.

2.4.6 Exercises